Docker Command Line Example

In this example, we demonstrate Docker, the architecture of Docker, virtualization techniques, Docker registries, and the Docker Command Line Interface (CLI).

1. What is Docker?

Docker is a platform used to develop, deploy, and run applications by using containers. Docker consists of:

- Docker Engine – a lightweight and powerful open source containerization technology combined with a workflow for building and containerizing your applications.

- Docker Hub – Docker's SaaS service for sharing and managing your application stacks.


2. Why Docker?

Faster delivery of your applications

- Developers focus on their applications inside containers, while system administrators and operations teams focus on running those containers in your deployment. This separation of duties streamlines and simplifies the management and deployment of code.

- Docker makes it easy to build new containers, enables rapid iteration of your applications, and increases the visibility of changes. This helps everyone in your organization understand how an application works and how it is built.

- Docker containers are lightweight and fast, which reduces the cycle time of development, testing, and deployment.

Deploy and scale more easily

- Docker containers run (almost) everywhere. You can deploy containers on desktops, physical servers, virtual machines, into data centers, and up to public and private clouds.

- Since Docker runs on so many platforms, it’s easy to move your applications around. You can easily move an application from a testing environment into the cloud and back whenever you need.

- Docker’s lightweight containers also make scaling up and down fast and easy. You can quickly launch more containers when needed and then shut them down easily when they’re no longer needed.

Get higher density and run more workloads

- Docker containers don’t need a hypervisor, so you can pack more of them onto your hosts. This means you get more value out of every server and can potentially reduce what you spend on equipment and licenses.

Faster deployment makes for easier management

- As Docker speeds up your workflow, it gets easier to make lots of small changes instead of huge, big bang updates. Smaller changes mean reduced risk and more uptime.

3. Docker Platform

Docker is an open platform for developing, shipping, and running applications. Docker enables you to separate your applications from your infrastructure so you can deliver software quickly. With Docker, you can manage your infrastructure in the same ways you manage your applications. By taking advantage of Docker’s methodologies for shipping, testing, and deploying code quickly, you can significantly reduce the delay between writing code and running it in production.

Docker provides the ability to package and run an application in a loosely isolated environment called a container. The isolation and security allow you to run many containers simultaneously on a given host. Containers are lightweight because they don’t need the extra load of a hypervisor, but run directly within the host machine’s kernel. This means you can run more containers on a given hardware combination than if you were using virtual machines. You can even run Docker containers within host machines that are actually virtual machines!

Docker provides tooling and a platform to manage the lifecycle of your containers:

- Develop your application and its supporting components using containers.

- The container becomes the unit for distributing and testing your application.

- When you’re ready, deploy your application into your production environment, as a container or an orchestrated service. This works the same whether your production environment is a local data center, a cloud provider, or a hybrid of the two.

3.1 Docker Engine

Docker Engine is a client-server application with these major components:

- A server, which is a type of long-running program called a daemon process (the dockerd command).

- A REST API, which specifies interfaces that programs can use to talk to the daemon and instruct it what to do.

- A command line interface (CLI) client (the docker command).

The CLI uses the Docker REST API to control or interact with the Docker daemon through scripting or direct CLI commands. Many other Docker applications use the underlying API and CLI.

The daemon creates and manages Docker objects, such as Images, Containers, Networks, and Volumes.
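To see the client/daemon split in practice, the short script below asks the daemon for its version twice: once through the docker CLI, which prints a Client section and a Server section, and once by sending an HTTP request straight to the Engine REST API with curl. It is a sketch that assumes a Linux-style setup where the daemon listens on the default UNIX socket /var/run/docker.sock and curl is installed; on Docker Desktop for Windows the daemon is reached through a named pipe, so the curl call will not work there as written. DOCKER_SOCK is a variable introduced only for this example.

```shell
#!/bin/sh
# Sketch: the docker CLI and a raw HTTP request both talk to the same daemon API.
# Assumption: the daemon listens on the default Linux UNIX socket below
# (export DOCKER_SOCK to point somewhere else).
DOCKER_SOCK="${DOCKER_SOCK:-/var/run/docker.sock}"

show_engine_version() {
  # The CLI prints a "Client" section and, when the daemon answers, a "Server" section.
  docker version

  # The same daemon queried directly over its REST API; /version is an Engine API endpoint.
  curl --silent --unix-socket "$DOCKER_SOCK" http://localhost/version
  echo
}

# Only run when docker and curl are installed and the daemon socket exists.
if command -v docker >/dev/null 2>&1 && command -v curl >/dev/null 2>&1 && [ -S "$DOCKER_SOCK" ]; then
  show_engine_version
else
  echo "No Docker daemon socket at $DOCKER_SOCK (or curl missing); skipping" >&2
fi
```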

4. What can I use Docker for?

Fast, consistent delivery of your applications

Docker streamlines the development lifecycle by allowing developers to work in standardized environments using local containers which provide your applications and services. Containers are great for continuous integration and continuous delivery (CI/CD) workflows.

Consider the following example scenario:

- Your developers write code locally and share their work with their colleagues using Docker containers.

- They use Docker to push their applications into a test environment and execute automated and manual tests.

- When developers find bugs, they can fix them in the development environment and redeploy them to the test environment for testing and validation.

- When testing is complete, getting the fix to the customer is as simple as pushing the updated image to the production environment.

Responsive deployment and scaling

Docker’s container-based platform allows for highly portable workloads. Docker containers can run on a developer’s local laptop, on physical or virtual machines in a data center, on cloud providers, or in a mixture of environments.

Docker’s portability and lightweight nature also make it easy to dynamically manage workloads, scaling up or tearing down applications and services as business needs dictate, in near real time.

Running more workloads on the same hardware

Docker is lightweight and fast. It provides a viable, cost-effective alternative to hypervisor-based virtual machines, so you can use more of your compute capacity to achieve your business goals. Docker is perfect for high density environments and for small and medium deployments where you need to do more with fewer resources.

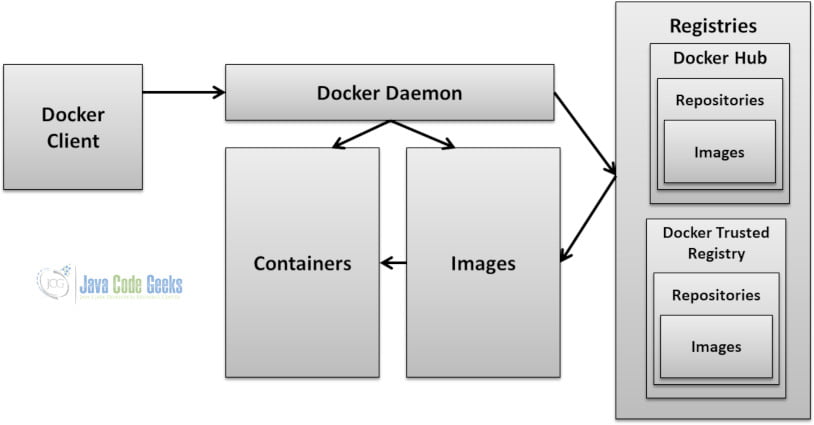

5. Docker architecture

Docker uses a client-server architecture. The Docker client talks to the Docker daemon, which does the heavy lifting of building, running, and distributing your Docker containers. The Docker client and daemon can run on the same system, or you can connect a Docker client to a remote Docker daemon. The Docker client and daemon communicate using a REST API, over UNIX sockets or a network interface.

- Client / Server Architecture

- Client takes user inputs and sends them to the Daemon

- Daemon builds, runs and distributes Containers

- Client and Daemon can run on the same host or on different hosts

- CLI Client and GUI Client (Kitematic)

5.1 Docker Daemon

The Docker daemon (dockerd) listens for Docker API requests and manages Docker objects such as images, containers, networks, and volumes. A daemon can also communicate with other daemons to manage Docker services.

5.2 Docker client

The Docker client (docker) is the primary way that many Docker users interact with Docker. When you use commands such as docker run, the client sends these commands to dockerd, which carries them out. The docker command uses the Docker API. The Docker client can communicate with more than one daemon.
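A minimal sketch of one client driving two daemons follows. It assumes you have a second machine reachable over SSH that runs a Docker daemon; REMOTE_DOCKER_HOST (for example ssh://user@remote-host) and the context name remote-demo are placeholders introduced for this example, not values from this article. The same docker binary talks to the local daemon by default, to the remote one when DOCKER_HOST is set for a single command, and to whichever daemon a named context points at when --context is given.

```shell
#!/bin/sh
# Sketch: one docker client, two daemons.
# Assumption: REMOTE_DOCKER_HOST is a placeholder you export yourself, e.g.
#   export REMOTE_DOCKER_HOST=ssh://user@remote-host
# "remote-demo" is an illustrative context name.
REMOTE="${REMOTE_DOCKER_HOST:-}"

use_two_daemons() {
  # Local daemon (the default endpoint).
  docker ps

  # One-off: point a single command at the remote daemon via DOCKER_HOST.
  DOCKER_HOST="$REMOTE" docker ps

  # Reusable: save the remote endpoint as a named context and select it per command.
  docker context create remote-demo --docker "host=$REMOTE"
  docker context ls
  docker --context remote-demo ps

  # Remove the illustrative context again.
  docker context rm remote-demo
}

# Skip unless a remote endpoint was provided and the CLI is installed.
if [ -n "$REMOTE" ] && command -v docker >/dev/null 2>&1; then
  use_two_daemons
else
  echo "Set REMOTE_DOCKER_HOST (and install docker) to run this sketch" >&2
fi
```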

Kitematic is another Docker client. Kitematic, the Docker GUI, runs on Mac and Windows operating systems. It is compatible with Docker Desktop and can be used as a graphical interface to manage your Docker containers.

5.3 Docker Registries

A Docker registry stores Docker images. Docker Hub is a public registry that anyone can use, and Docker is configured to look for images on Docker Hub by default. You can even run your own private registry.

Docker Enterprise Edition (Docker EE) is the enterprise container platform from Docker Inc to be used across the entire software supply chain. It is a fully-integrated solution for container-based application development, deployment, and management. With integrated end-to-end security, Docker EE enables application portability by abstracting your infrastructure so that applications can move seamlessly from development to production.

Docker EE Standard (formerly known as Docker Datacenter) and Docker EE Advanced include the following components:

- Docker EE Basic (formerly “Commercially Supported” or “CS” Engine), the commercially supported Docker container runtime and platform

- Universal Control Plane (UCP), the web-based, unified cluster and application management solution

- Docker Trusted Registry (DTR), a resilient and secure image management repository

Together they provide an integrated solution with the following design goals:

- Agility — the Docker API is used to interface with the platform so that operational features do not slow down application delivery

- Portability — the platform abstracts details of the infrastructure for applications

- Control — the environment is secure by default, provides robust access control, and logging of all operations

When you use the docker pull or docker run commands, the required images are pulled from your configured registry. When you use the docker push command, your image is pushed to your configured registry.
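The pull, tag, and push workflow can be tried end to end against a throwaway private registry running on your own machine. The sketch below assumes port 5000 is free and uses the official registry:2 image; the container name registry and the repository name my-ubuntu are illustrative choices. Tagging an image with a registry host prefix such as localhost:5000/ is what makes docker push send it to that registry instead of Docker Hub.

```shell
#!/bin/sh
# Sketch: pull, tag, push and pull again against a local private registry.
# Assumptions: port 5000 is free; "registry" and "my-ubuntu" are illustrative names.
docker_ready() { command -v docker >/dev/null 2>&1 && docker info >/dev/null 2>&1; }

registry_demo() {
  # Start a private registry from the official registry:2 image.
  docker run -d -p 5000:5000 --name registry registry:2

  # Pull from the default registry (Docker Hub).
  docker pull ubuntu

  # Re-tag the image so that its name points at the private registry, then push it there.
  docker tag ubuntu localhost:5000/my-ubuntu
  docker push localhost:5000/my-ubuntu

  # Remove both local tags, then pull the image back from the private registry.
  docker image rm ubuntu localhost:5000/my-ubuntu
  docker pull localhost:5000/my-ubuntu

  # Clean up the registry container and its anonymous volume.
  docker container stop registry
  docker container rm -v registry
}

if docker_ready; then
  registry_demo
else
  echo "Docker daemon not reachable; skipping" >&2
fi
```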

5.4 Docker Objects

When you use Docker, you are creating and using images, containers, networks, volumes, plugins, and other objects.

5.4.1 Images

An image is a read-only template with instructions for creating a Docker container. Often, an image is based on another image, with some additional customization. For example, you may build an image which is based on the ubuntu image, but installs the Apache web server and your application, as well as the configuration details needed to make your application run.

You might create your own images or you might only use those created by others and published in a registry. To build your own image, you create a Dockerfile with a simple syntax for defining the steps needed to create the image and run it. Each instruction in a Dockerfile creates a layer in the image. When you change the Dockerfile and rebuild the image, only those layers which have changed are rebuilt. This is part of what makes images so lightweight, small, and fast, when compared to other virtualization technologies.
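To make the layer behaviour concrete, here is a sketch of the image described above: based on ubuntu, with the Apache web server and a page standing in for your application. The ubuntu:22.04 tag, the image name my-apache, and the page contents are assumptions for illustration. The script writes the Dockerfile and build context into a temporary directory, builds the image, changes only the page, and builds again; on the second build Docker should reuse the cached FROM and RUN layers and redo only the COPY step and the steps after it.

```shell
#!/bin/sh
# Sketch: an ubuntu-based image that adds Apache plus a page, built twice to show layer reuse.
# Assumptions: the ubuntu:22.04 tag, the image name "my-apache" and the page are illustrative.
docker_ready() { command -v docker >/dev/null 2>&1 && docker info >/dev/null 2>&1; }

workdir="$(mktemp -d)"

# FROM picks the base image; RUN and COPY each add a filesystem layer on top of it.
cat > "$workdir/Dockerfile" <<'EOF'
FROM ubuntu:22.04
RUN apt-get update \
 && DEBIAN_FRONTEND=noninteractive apt-get install -y --no-install-recommends apache2 \
 && rm -rf /var/lib/apt/lists/*
COPY index.html /var/www/html/index.html
EXPOSE 80
CMD ["apache2ctl", "-D", "FOREGROUND"]
EOF

echo '<h1>my-apache v1</h1>' > "$workdir/index.html"

build_twice() {
  # First build: every step runs.
  docker build -t my-apache "$workdir"

  # Change only the page. FROM and RUN come from the build cache;
  # the COPY step and the steps after it are redone.
  echo '<h1>my-apache v2</h1>' > "$workdir/index.html"
  docker build -t my-apache "$workdir"

  # List the layers that make up the final image.
  docker history my-apache
}

if docker_ready; then
  build_twice
else
  echo "Docker daemon not reachable; skipping build" >&2
fi
```

Once built, docker run -d -p 8080:80 my-apache should serve the page at http://localhost:8080. Placing the slow, rarely changing apt-get step before the frequently changing COPY step is what lets the cache do its job.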

5.4.2 Containers

A container is a runnable instance of an image. You can create, start, stop, move, or delete a container using the Docker API or CLI. You can connect a container to one or more networks, attach storage to it, or even create a new image based on its current state.

By default, a container is relatively well isolated from other containers and its host machine. You can control how isolated a container’s network, storage, or other underlying subsystems are from other containers or from the host machine.

A container is defined by its image as well as any configuration options you provide to it when you create or start it. When a container is removed, any changes to its state that are not stored in persistent storage disappear.
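The difference between container state and persistent storage can be seen with two short experiments, sketched below under the assumption that the ubuntu image can be pulled: a file written inside one container is gone in the next, while a file written to a named volume survives. The volume name demo-data and the file paths are illustrative.

```shell
#!/bin/sh
# Sketch: container-local changes disappear with the container, a named volume keeps them.
# Assumptions: the ubuntu image can be pulled; "demo-data" is an illustrative volume name.
docker_ready() { command -v docker >/dev/null 2>&1 && docker info >/dev/null 2>&1; }

persistence_demo() {
  # Written to the container's own read-write layer; --rm deletes the container on exit.
  docker run --rm ubuntu sh -c 'echo scratch > /tmp/note.txt'
  # A brand-new container starts from the image again, so ls reports the file as missing.
  docker run --rm ubuntu ls /tmp/note.txt

  # Written to a named volume mounted at /data instead.
  docker volume create demo-data
  docker run --rm -v demo-data:/data ubuntu sh -c 'echo persisted > /data/note.txt'
  # Another new container that mounts the same volume can still read the file.
  docker run --rm -v demo-data:/data ubuntu cat /data/note.txt

  # Remove the illustrative volume.
  docker volume rm demo-data
}

if docker_ready; then
  persistence_demo
else
  echo "Docker daemon not reachable; skipping" >&2
fi
```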

Example docker run command

The following command runs an ubuntu container, attaches interactively to your local command-line session, and runs /bin/bash.

$ docker run -i -t ubuntu /bin/bash

When you run this command, the following happens (assuming you are using the default registry configuration):

- If you do not have the ubuntu image locally, Docker pulls it from your configured registry, as though you had run docker pull ubuntu manually.

- Docker creates a new container, as though you had run a docker container create command manually.

- Docker allocates a read-write filesystem to the container, as its final layer. This allows a running container to create or modify files and directories in its local filesystem.

- Docker creates a network interface to connect the container to the default network, since you did not specify any networking options. This includes assigning an IP address to the container. By default, containers can connect to external networks using the host machine’s network connection.

- Docker starts the container and executes /bin/bash. Because the container is running interactively and attached to your terminal (due to the -i and -t flags), you can provide input using your keyboard while the output is logged to your terminal.

- When you type exit to terminate the /bin/bash command, the container stops but is not removed. You can start it again or remove it.
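The same sequence can be run one step at a time, which makes each stage of docker run visible. This sketch assumes an interactive terminal (it skips itself otherwise) and uses demo-shell as an illustrative container name; it pulls the image, creates the container, starts and attaches to it, then lists and removes it after you type exit.

```shell
#!/bin/sh
# Sketch: the stages of "docker run -i -t ubuntu /bin/bash", one command each.
# Assumption: "demo-shell" is an illustrative container name.
docker_ready() { command -v docker >/dev/null 2>&1 && docker info >/dev/null 2>&1; }

step_by_step() {
  # 1. Pull the image, as docker run does when it is missing locally.
  docker pull ubuntu

  # 2. Create the container without starting it; -i keeps STDIN open, -t allocates a TTY.
  docker container create -i -t --name demo-shell ubuntu /bin/bash

  # 3. Start it and attach your terminal; type "exit" to leave bash.
  docker container start --attach --interactive demo-shell

  # 4. After exit the container is stopped but still listed.
  docker container ls --all --filter name=demo-shell

  # 5. Remove it explicitly.
  docker container rm demo-shell
}

# Needs a daemon and an interactive terminal on standard input.
if docker_ready && [ -t 0 ]; then
  step_by_step
else
  echo "Needs a Docker daemon and an interactive terminal; skipping" >&2
fi
```

If you do not want to keep the stopped container, adding --rm to the original command (docker run --rm -i -t ubuntu /bin/bash) removes it automatically when bash exits.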

5.4.3 Services

Services allow you to scale containers across multiple Docker daemons, which all work together as a swarm with multiple managers and workers. Each member of a swarm is a Docker daemon, and the daemons all communicate using the Docker API. A service allows you to define the desired state, such as the number of replicas of the service that must be available at any given time. By default, the service is load-balanced across all worker nodes. To the consumer, the Docker service appears to be a single application. Docker Engine supports swarm mode in Docker 1.12 and higher.
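A single Docker host is enough to try a service, because one daemon can form a one-node swarm. The sketch below assumes the host is not already part of a swarm (the script checks this and skips otherwise) and that port 8080 is free; the service name web and the nginx image are illustrative. It declares a desired state of three replicas, scales it to five, and then cleans up. On a host with several network interfaces, docker swarm init may ask for --advertise-addr.

```shell
#!/bin/sh
# Sketch: a replicated service on a one-node swarm.
# Assumptions: port 8080 is free; "web" and the nginx image are illustrative choices.
docker_ready() { command -v docker >/dev/null 2>&1 && docker info >/dev/null 2>&1; }

service_demo() {
  # Make this daemon a swarm manager (and the swarm's only node).
  docker swarm init

  # Desired state: 3 replicas of nginx, published on port 8080 of every swarm node.
  docker service create --name web --replicas 3 --publish published=8080,target=80 nginx

  docker service ls       # the service and its replica count
  docker service ps web   # the individual tasks (containers) behind it

  # Change the desired state; the swarm starts two more tasks.
  docker service scale web=5

  # Clean up: remove the service and dissolve the one-node swarm.
  docker service rm web
  docker swarm leave --force
}

# Only run on a daemon that is not already part of a swarm.
if docker_ready && [ "$(docker info --format '{{.Swarm.LocalNodeState}}')" = "inactive" ]; then
  service_demo
else
  echo "Needs a Docker daemon that is not in a swarm; skipping" >&2
fi
```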

6. Benefits of Docker

- Separation of Concerns – Developers focus on building their applications inside the container. System administrators and operations teams focus on running the container in the deployment environment.

- Fast Development Cycle.

- Application Portability – Build in one environment, ship to another.

- Scalability – Easily spin up new containers if needed.

- Run more applications on one host machine.

7. Virtualization Techniques

The following are the various Virtualization Techniques:

7.1 Pre-Virtualization

Legacy systems did not use virtualization techniques. The problems faced with legacy systems are:

- Slow deployment times

- Huge costs

- Wasted resources

- Difficult to scale

- Difficult to migrate

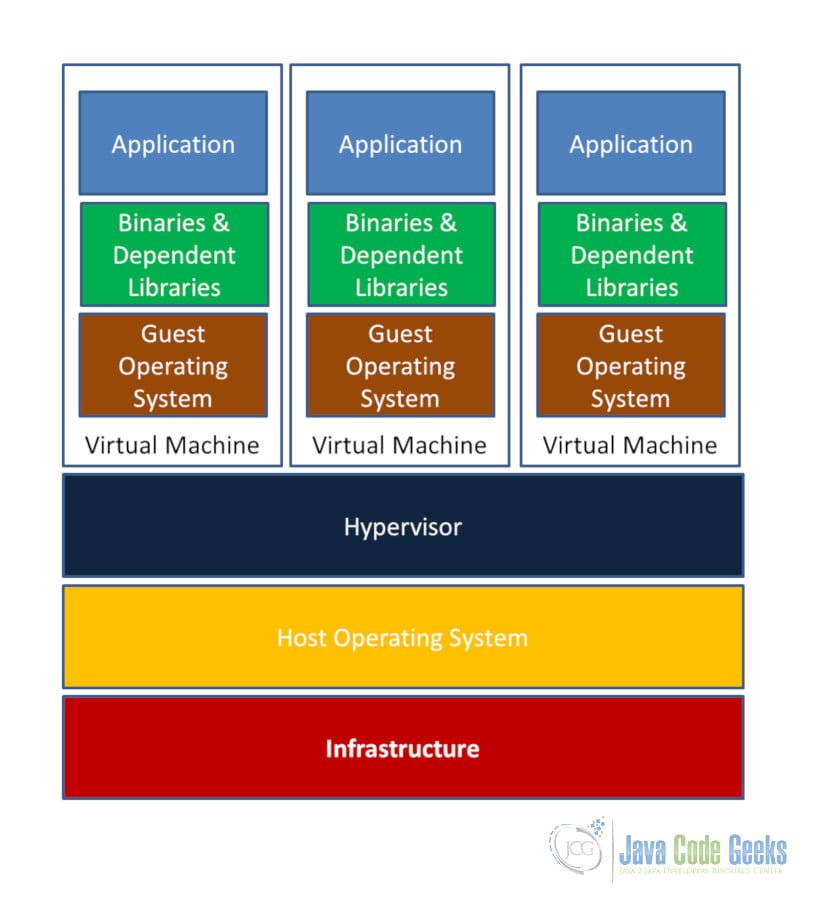

7.2 Hypervisor-based Virtualization

Hypervisor-based Virtualization has the following features:

- One Physical Server can contain multiple applications

- Each application runs in a Virtual Machine (VM)

- Each guest OS has its own allocated CPU, RAM, and storage

- For load balancing and scalability, we can add more VMs instead of buying more servers and installing them in the rack.

7.2.1 Benefits of Virtual Machines

- Better Resource Pooling – One Physical Machine divided into multiple Virtual Machines

- Easier to Scale

- Virtual Machines in the Cloud – Rapid Elasticity and Pay as you go model

7.2.2 Limitation of Virtual Machines

- Each VM still requires CPU allocation, storage, RAM, and an entire guest OS.

- The more VMs you run, the more resources you need.

- A guest OS means wasted resources.

- Application portability is not guaranteed.

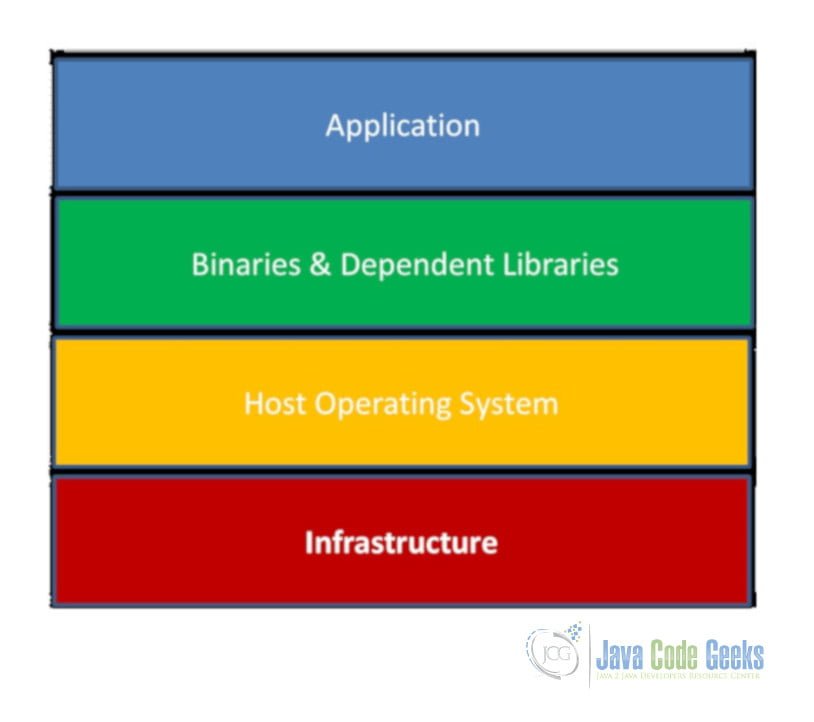

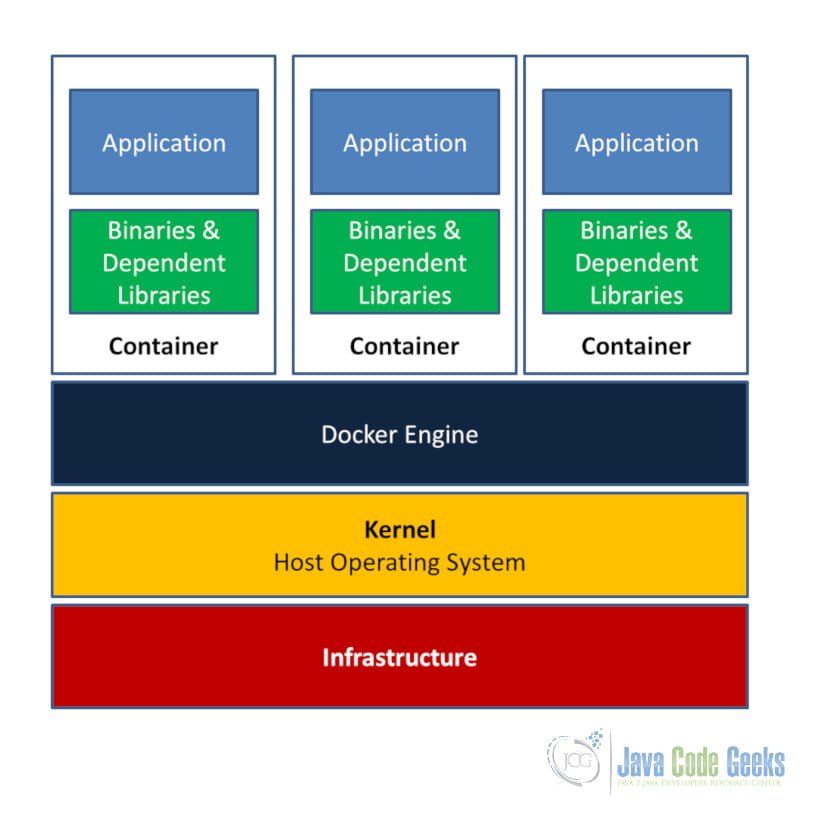

7.3 Container-based Virtualization

Container-based Virtualization uses the Kernel on the host’s Operating System to run multiple Guest Instances.

- Each Guest Instance is called a Container

- Each Container has its own Root Filesystem, Processes, Memory, Devices, Network Ports

- The server can be a physical machine or a virtual machine. An OS is installed on the server, and the containers interact with its kernel, using certain kernel features to create an isolated application platform. Within each container, we install our application and the libraries that the application depends on.

7.3.1 Why do we need Containers?

Installing several Java versions side by side on one machine easily leads to conflicts; instead, each container can have its own Java version. Example: Container 1 has Java 7, Container 2 has Java 8, and Container 3 has Java 9, each running the same application.
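With containers, the host needs no Java installation at all: each JDK version lives in its own image. The sketch below runs java -version in three throwaway containers. The eclipse-temurin image and its 8-jdk, 11-jdk and 17-jdk tags are an assumption of this example; Java 7 and Java 9 are not published under that image, so other versions are used to show the same idea.

```shell
#!/bin/sh
# Sketch: several JDK versions side by side, each in its own container.
# Assumption: the eclipse-temurin image and the tags below are illustrative choices;
# they differ from the Java 7/8/9 in the text because 7 and 9 are not published there.
docker_ready() { command -v docker >/dev/null 2>&1 && docker info >/dev/null 2>&1; }

java_versions_demo() {
  for tag in 8-jdk 11-jdk 17-jdk; do
    echo "== eclipse-temurin:$tag =="
    # java -version writes to stderr; --rm removes each container when it exits.
    docker run --rm "eclipse-temurin:$tag" java -version
  done
}

if docker_ready; then
  java_versions_demo
else
  echo "Docker daemon not reachable; skipping" >&2
fi
```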

A host can run many more containers than VMs:

- Containers don’t have a guest OS, so there is no need to virtualize any hardware.

- A container’s filesystem can be much smaller than a VM’s.

- Containers spin up fast, like a process.

7.3.2 Containers Vs Virtual Machines

A container runs natively on Linux and shares the kernel of the host machine with other containers. It runs a discrete process, taking no more memory than any other executable, making it lightweight.

- Containers are more lightweight

- No need to install a guest OS

- Less CPU, RAM, and storage space required

- More containers per machine than virtual machines

- Greater portability

By contrast, a virtual machine (VM) runs a full-blown “guest” operating system with virtual access to host resources through a hypervisor. In general, VMs provide an environment with more resources than most applications need.

7.3.3 Containerization

The use of Linux containers to deploy applications is called containerization. Containers are:

- Flexible: Even the most complex applications can be containerized.

- Lightweight: Containers leverage and share the host kernel.

- Interchangeable: You can deploy updates and upgrades on-the-fly.

- Portable: You can build locally, deploy to the cloud, and run anywhere.

- Scalable: You can increase and automatically distribute container replicas.

- Stackable: You can stack services vertically and on-the-fly.

Containerization makes CI/CD seamless. For example:

- applications have no system dependencies

- updates can be pushed to any part of a distributed application

- resource density can be optimized.

8. Install Docker Desktop for Windows

To download and install Docker Desktop for Windows, head to Docker Hub:

https://hub.docker.com/editions/community/docker-ce-desktop-windows

Docker Desktop for Windows is Docker designed to run on Windows 10. It is a native Windows application that provides an easy-to-use development environment for building, shipping, and running dockerized apps. Docker Desktop for Windows uses Windows-native Hyper-V virtualization and networking and is the fastest and most reliable way to develop Docker apps on Windows. Docker Desktop for Windows supports running both Linux and Windows Docker containers.

Right-click the Windows icon -> Apps and Features -> Programs and Features -> Turn Windows features on or off, and check that Hyper-V is enabled (checked).

Click the Docker Desktop icon on your desktop.

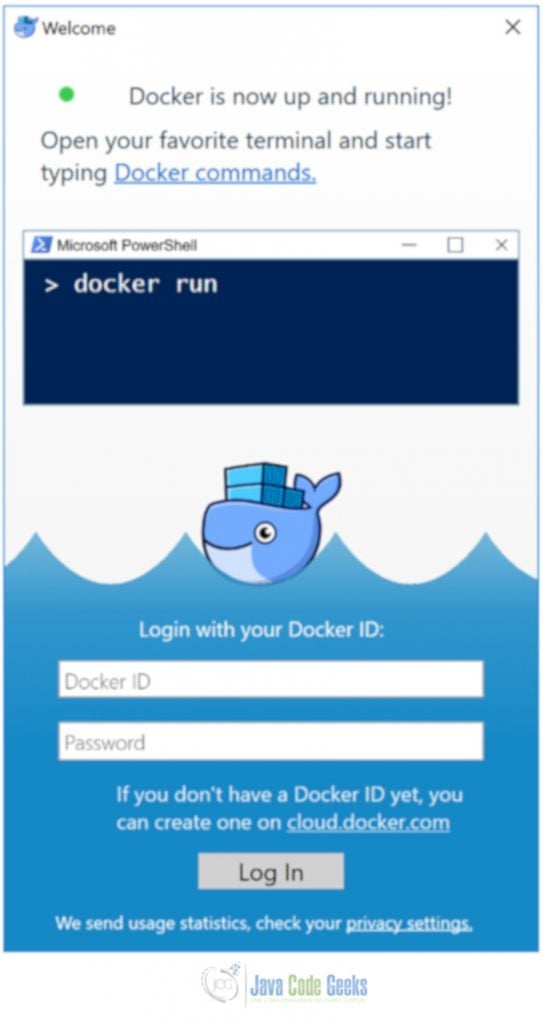

The below screen shows that Docker Desktop is starting:

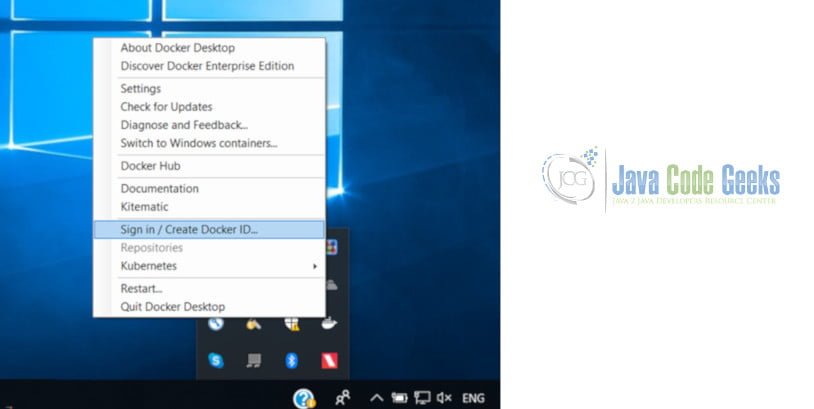

The below screen shows that the Docker Desktop is running:

The screen below shows the options available when you right-click the whale icon:

When the whale in the status bar stays steady, Docker is up and running and accessible from any terminal window.

If the whale is hidden in the Notifications area, click the up arrow on the taskbar to show it.

If you just installed the app, you also get a popup success message with suggested next steps and a link to the Docker documentation.

The screen below shows that Docker Desktop is up and running. It prompts for your Docker ID and password to connect to Docker Hub:

When initialization is complete, select About Docker from the Notifications area icon to verify that you have the latest version.

Congratulations! You are up and running with Docker Desktop for Windows.

Docker is a full development platform for creating containerized apps, and Docker Desktop for Windows is the best way to get started with Docker on Windows.

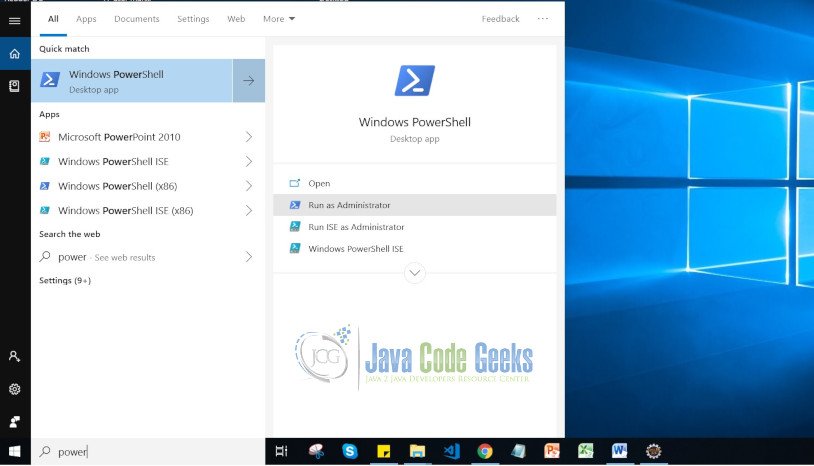

The screen below shows the Windows PowerShell option, which will be used as the Docker command line client:

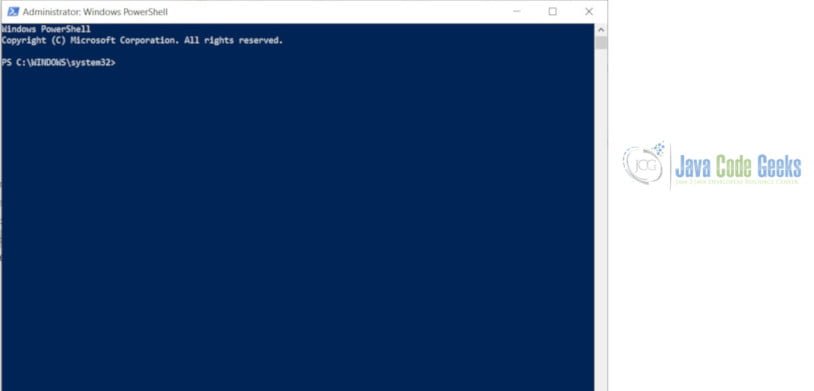

The below screen shows that it is ready to accept Docker CLI Commands:

9. Docker Command Line Interface

The Docker CLI (the docker command) is the program that users work with to interact with the Docker daemon.

9.1 Docker Commands

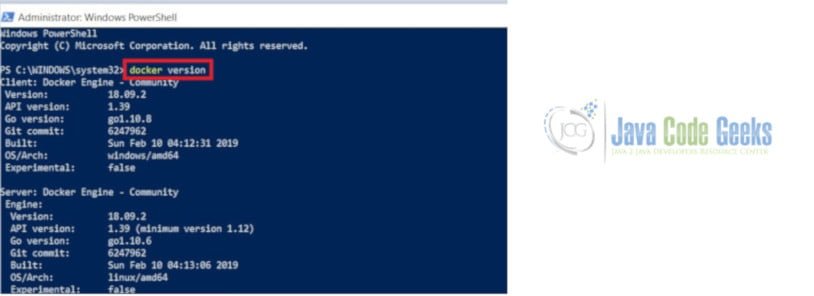

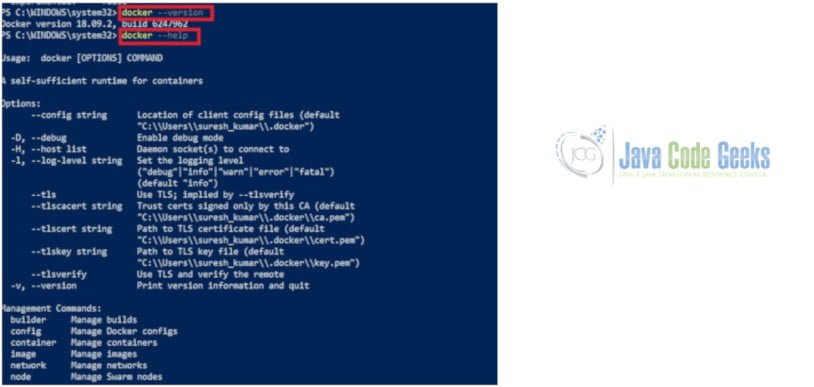

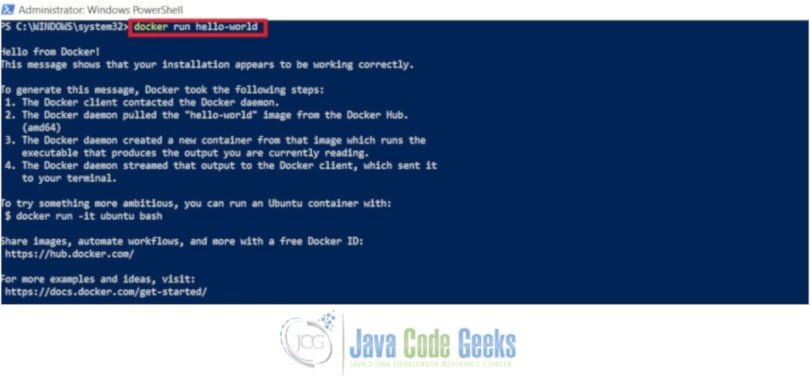

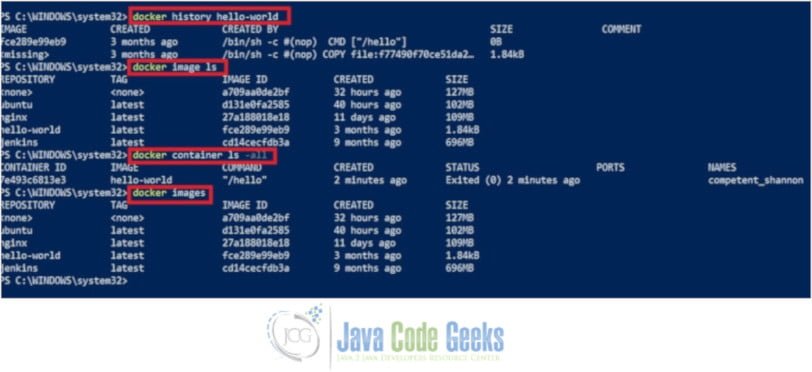

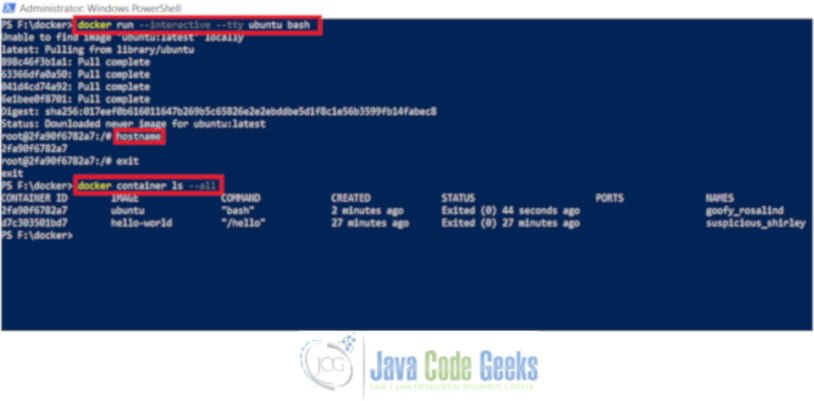

The following screens show the outputs of various Docker commands typed by the user at the Docker Command Line Interface:

docker version – Show the Docker version information

docker --version – Show the Docker version, so you can check that it is a supported one.

docker --help – Display information about how to use a command.

docker run hello-world – Run a container from the hello-world image.

docker history hello-world – Show the history of an image

docker image ls – List Images

docker container ls --all – List all the Containers

docker images – List Images

docker run --interactive --tty ubuntu bash – Pull an image of the Ubuntu OS and run an interactive terminal inside the spawned container

At the root # prompt, check the hostname of the container. The hostname is assigned as the container ID (and is also used in the prompt). Exit the shell with the exit command (which also stops the container).

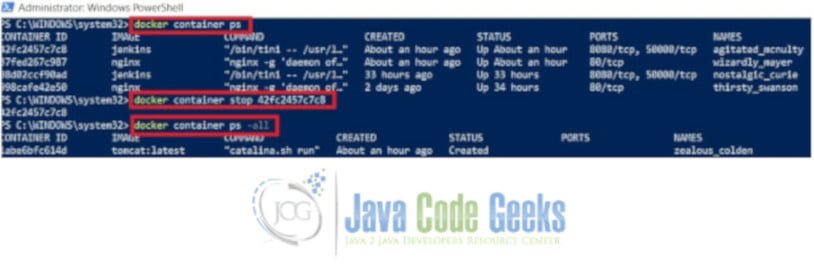

docker container ps – List running Containers

docker container stop 42fc2457c7c8 – Stops a running container using container id

docker container ps -a or --all – Show all containers, including stopped ones


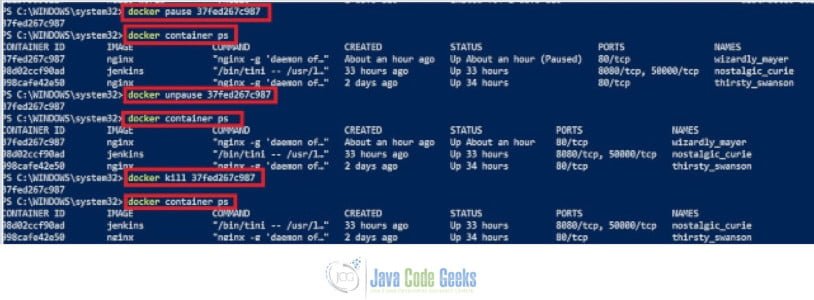

docker pause 37fed267c987 – suspends all processes in the specified container.

docker unpause 37fed267c987 – un-suspends all processes in the specified container.

docker kill 37fed267c987 – Kills the specified running containers

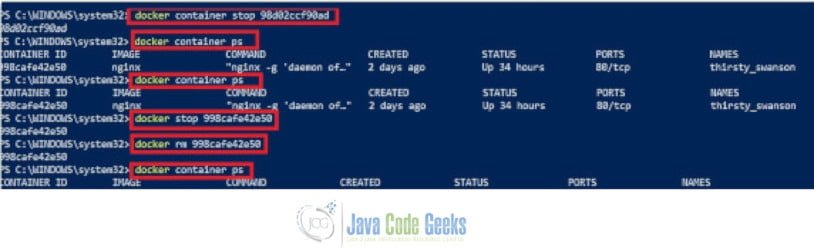

docker container stop 98d02ccf90ad – Stops the specified running container.

docker stop 98d02ccf90ad – Stops the specified running container.

docker rm 98d02ccf90ad – Remove the specified containers

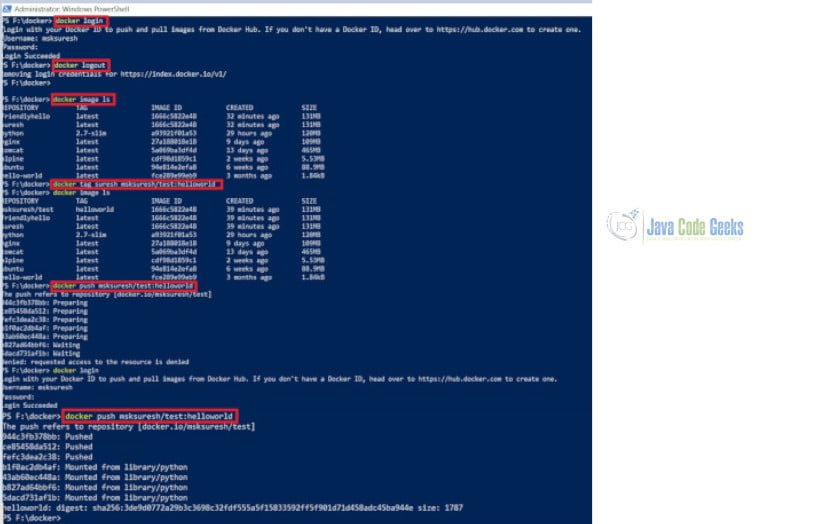
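The commands above can also be run against a container name instead of an ID, which makes a lifecycle walk-through easier to script. In the sketch below, sleeper is an illustrative name and busybox is used only because it is small; the script prints the container's state after each step using docker container inspect.

```shell
#!/bin/sh
# Sketch: drive one container through its lifecycle by name.
# Assumptions: "sleeper" is an illustrative name; busybox is used only because it is small.
docker_ready() { command -v docker >/dev/null 2>&1 && docker info >/dev/null 2>&1; }

# Print the container's current state (for example running, paused, exited).
container_state() { docker container inspect --format '{{.State.Status}}' "$1"; }

lifecycle_demo() {
  # -d runs the container in the background; it just sleeps for an hour.
  docker run -d --name sleeper busybox sleep 3600

  docker pause sleeper;   container_state sleeper   # paused
  docker unpause sleeper; container_state sleeper   # running

  # stop sends SIGTERM, then SIGKILL once the 2-second grace period expires.
  docker stop --time 2 sleeper; container_state sleeper   # exited

  # kill only works on a running container, so start it again first.
  docker start sleeper
  docker kill sleeper    # sends SIGKILL immediately
  docker wait sleeper    # blocks until it has stopped, then prints its exit code

  docker rm sleeper
}

if docker_ready; then
  lifecycle_demo
else
  echo "Docker daemon not reachable; skipping" >&2
fi
```

docker stop gives the process a chance to shut down cleanly before falling back to SIGKILL, while docker kill sends SIGKILL straight away, which is why stop is usually preferred.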

docker login – Log in to a Docker registry

docker logout – Log out from a Docker registry

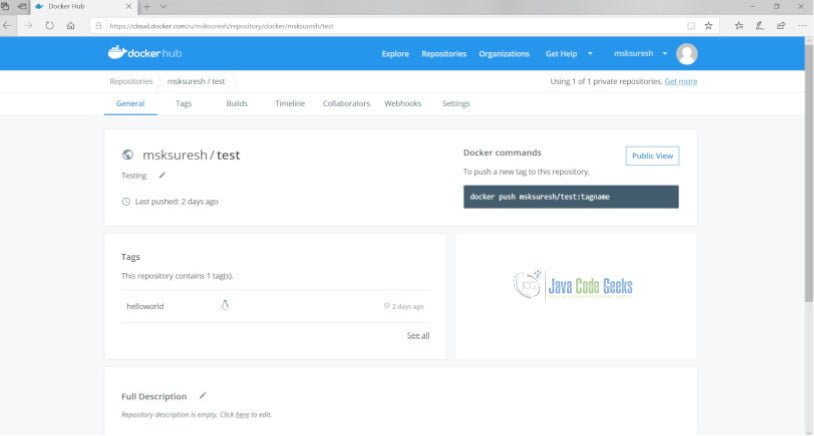

docker tag suresh msksuresh/test:helloworld – Create a tag TARGET_IMAGE that refers to SOURCE_IMAGE

docker push msksuresh/test:helloworld – Push your image to Docker Hub

The screen above shows that the helloworld image has been pushed to Docker Hub.

9.2 Dockerfile

Docker can build images automatically by reading the instructions from a Dockerfile. A Dockerfile is a text document that contains all the commands a user could call on the command line to assemble an image. Using docker build, users can create an automated build that executes several command-line instructions in succession.

The docker build command builds an image from a Dockerfile and a context. The build’s context is the set of files at a specified location PATH or URL. The PATH is a directory on your local filesystem. The URL is a Git repository location.

A context is processed recursively. So, a PATH includes any subdirectories and the URL includes the repository and its submodules.

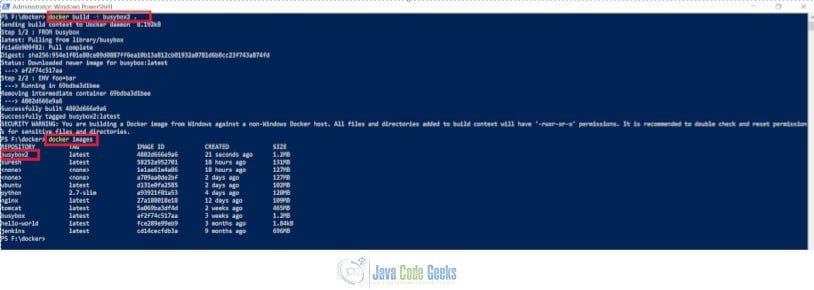

Create a file named Dockerfile with the following contents:

FROM busybox

ENV foo=bar

The following screen shows that it builds an image from a dockerfile:

docker build -t busybox2 . – Build a new image called busybox2, using the current directory (which contains the Dockerfile) as the build context

docker images – List all the Images
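The busybox2 example can be reproduced as one script. This sketch writes the two-line Dockerfile into a temporary directory and passes that directory to docker build as the build context, which is equivalent to running docker build -t busybox2 . from inside it. It then starts a container to confirm that the ENV instruction was baked into the image.

```shell
#!/bin/sh
# Sketch: build busybox2 from the two-line Dockerfile and check the ENV value.
# The temporary directory is the build context passed to docker build.
docker_ready() { command -v docker >/dev/null 2>&1 && docker info >/dev/null 2>&1; }

ctx="$(mktemp -d)"
cat > "$ctx/Dockerfile" <<'EOF'
FROM busybox
ENV foo=bar
EOF

build_and_check() {
  # Same as running "docker build -t busybox2 ." from inside $ctx.
  docker build -t busybox2 "$ctx"

  # The new image appears in the local image list.
  docker images busybox2

  # ENV is stored in the image, so every container started from it sees foo=bar.
  docker run --rm busybox2 sh -c 'echo "foo=$foo"'   # prints foo=bar
}

if docker_ready; then
  build_and_check
else
  echo "Docker daemon not reachable; skipping build" >&2
fi
```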

10. Docker CLI Commands

The following are Docker CLI Commands for your reference:

- docker attach – Attach local standard input, output, and error streams to a running container

- docker build – Build an image from a Dockerfile

- docker builder – Manage builds

- docker checkpoint – Manage checkpoints

- docker commit – Create a new image from a container’s changes

- docker config – Manage Docker configs

- docker container – Manage containers

- docker cp – Copy files/folders between a container and the local filesystem

- docker create – Create a new container

- docker deploy – Deploy a new stack or update an existing stack

- docker diff – Inspect changes to files or directories on a container’s filesystem

- docker engine – Manage the docker engine

- docker events – Get real time events from the server

- docker exec – Run a command in a running container

- docker export – Export a container’s filesystem as a tar archive

- docker history – Show the history of an image

- docker image – Manage images

- docker images – List images

- docker import – Import the contents from a tarball to create a filesystem image

- docker info – Display system-wide information

- docker inspect – Return low-level information on Docker objects

- docker kill – Kill one or more running containers

- docker load – Load an image from a tar archive or STDIN

- docker login – Log in to a Docker registry

- docker logout – Log out from a Docker registry

- docker logs – Fetch the logs of a container

- docker manifest – Manage Docker image manifests and manifest lists

- docker network – Manage networks

- docker node – Manage Swarm nodes

- docker pause – Pause all processes within one or more containers

- docker plugin – Manage plugins

- docker port – List port mappings or a specific mapping for the container

- docker ps – List containers

- docker pull – Pull an image or a repository from a registry

- docker push – Push an image or a repository to a registry

- docker rename – Rename a container

- docker restart – Restart one or more containers

- docker rm – Remove one or more containers

- docker rmi – Remove one or more images

- docker run – Run a command in a new container

- docker save – Save one or more images to a tar archive (streamed to STDOUT by default)

- docker search – Search the Docker Hub for images

- docker secret – Manage Docker secrets

- docker service – Manage services

- docker stack – Manage Docker stacks

- docker start – Start one or more stopped containers

- docker stats – Display a live stream of container(s) resource usage statistics

- docker stop – Stop one or more running containers

- docker swarm – Manage Swarm

- docker system – Manage Docker

- docker tag – Create a tag TARGET_IMAGE that refers to SOURCE_IMAGE

- docker top – Display the running processes of a container

- docker trust – Manage trust in Docker images

- docker unpause – Unpause all processes within one or more containers

- docker update – Update configuration of one or more containers

- docker version – Show the Docker version information

- docker volume – Manage volumes

- docker wait – Block until one or more containers stop, then print their exit codes

11. Summary

Docker provides the ability to package and run an application in a loosely isolated environment called a container. The isolation and security allow you to run many containers simultaneously on a given host. Containers are lightweight because they don’t need the extra load of a hypervisor, but run directly within the host machine’s kernel. This means you can run more containers on a given hardware combination than if you were using virtual machines. You can even run Docker containers within host machines that are actually virtual machines!

In this Docker Command Line Example, we have learned about the architecture of Docker, the benefits of Docker, types of virtualization, Docker registries, the Docker Command Line Interface, and Docker commands.