Apache Hadoop Knox Tutorial

In this tutorial, we will learn about Apache Knox. Knox provides the REST API Gateway for the Apache Hadoop Ecosystem. We will go through the basics of Apache Knox in the following sections.

1. Introduction

Apache Knox is an open source project under the Apache Software Foundation, like most other Hadoop Ecosystem projects. It provides a REST API Gateway for interacting with Apache Hadoop clusters. When the Knox Gateway is deployed in front of a Hadoop cluster, it becomes the single access point for all REST interactions with that cluster.

Knox provides the following basic functionality:

- Authentication (LDAP and Active Directory Authentication Provider)

- Federation/SSO (HTTP Header Based Identity Federation)

- Authorization (Service Level Authorization)

- Auditing

The Knox API Gateway is designed as a reverse proxy, with pluggability in mind both for policy enforcement (through providers) and for the backend services for which it proxies requests.

Policy enforcement covers authentication/federation, authorization, audit, dispatch, host mapping and content rewrite rules. Each Apache Hadoop cluster protected by Apache Knox has its set of REST APIs represented by a single cluster-specific application context path. This allows the Knox Gateway to both protect multiple clusters and present the REST API consumer with a single endpoint for access to all of the services required, across the multiple clusters.
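To make the idea of a cluster-specific context path more concrete, here is a minimal sketch of how a service URL behind the gateway could be composed. The `gateway` path segment and port 8443 are assumed here to be the defaults of a stock Knox installation, and the cluster names `sandbox` and `production` are only illustrative; none of these values come from this tutorial, so check them against your own configuration.

```shell
#!/bin/sh
# Compose the URL of a service exposed through Knox.
# Assumed layout: https://<host>:<port>/gateway/<cluster>/<service-path>
# ("gateway" is assumed to be the configured gateway path.)
knox_url() {
  host="$1"; port="$2"; cluster="$3"; service_path="$4"
  printf 'https://%s:%s/gateway/%s/%s\n' "$host" "$port" "$cluster" "$service_path"
}

# The same gateway host serves two (hypothetical) clusters;
# only the context path segment differs.
knox_url localhost 8443 sandbox 'webhdfs/v1/tmp?op=LISTSTATUS'
knox_url localhost 8443 production 'webhdfs/v1/tmp?op=LISTSTATUS'
```

The client only ever talks to the gateway host and port; which cluster a request reaches is decided by the path segment that follows the gateway path.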

Apache Knox is already compatible with a lot of Apache Ecosystem projects and provides integration with the following services:

- Ambari

- Hadoop Distributed File System

- Templeton (HCatalog)

- Stargate (HBase)

- Oozie

- Hive/JDBC

- Yarn RM

- Storm

Besides these, Apache Knox also supports the following Apache Hadoop UIs:

- Name Node UI

- Job History UI

- Oozie UI

- HBase UI

- Yarn UI

- Spark UI

- Ambari UI

- Ranger Admin Console

2. Apache Knox Setup

In this section, we will go through the basic setup of Apache Knox. Follow the steps in the given order to set up Knox in the Hadoop Cluster.

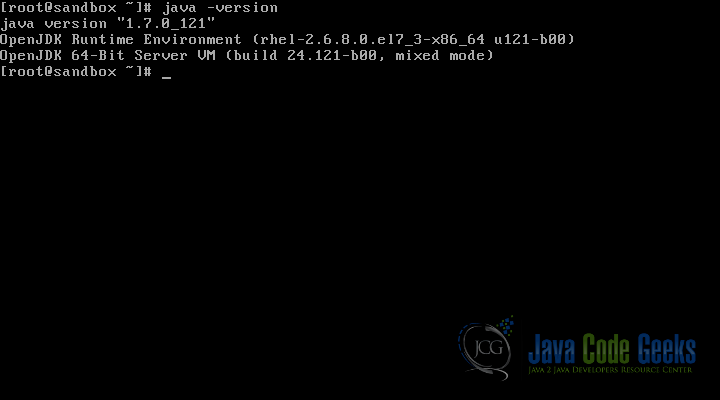

2.1 Java Version

The very first requirement of Apache Knox is Java. It must be installed on the system, and it must be version 1.6 or later. Use the following command to check the version of Java installed:

java -version

As you can see in the screenshot above, we have version 1.7 installed which satisfies the requirement of Apache Knox.
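If you prefer to script this check instead of reading the output by eye, the following is a rough sketch. It assumes the usual `version "..."` line printed by `java -version` and handles both the legacy `1.x` numbering and the newer scheme; the helper name `java_major` is made up for this example.

```shell
#!/bin/sh
# Extract the Java major version from a `java -version` output line.
# Legacy scheme: "1.7.0_80" -> 7. Newer scheme: "11.0.2" -> 11.
java_major() {
  version=$(printf '%s\n' "$1" | sed -n 's/.*version "\([^"]*\)".*/\1/p')
  case "$version" in
    1.*) version=${version#1.} ;;   # drop the leading "1." of the legacy scheme
  esac
  # Keep only the leading digits.
  printf '%s\n' "${version%%[!0-9]*}"
}

# `java -version` writes to stderr, so redirect it before parsing.
if command -v java >/dev/null 2>&1; then
  line=$(java -version 2>&1 | grep 'version "' | head -n 1)
  major=$(java_major "$line")
  if [ "${major:-0}" -ge 6 ]; then
    echo "Java $major satisfies the requirement"
  else
    echo "Java 1.6 or later is required (found: $line)"
  fi
else
  echo "java was not found on the PATH"
fi
```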

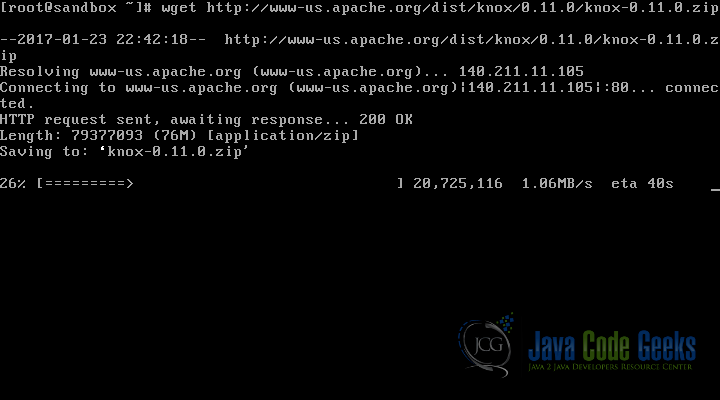

2.2 Download Apache Knox

The next step is to download the latest version of Apache Knox. At the time of writing, the latest version is 0.11.0. You can download Knox from one of the Apache Mirrors.

You can download the binary package if you just want to use Apache Knox without going into its source. The binary package of version 0.11.0 is available here.

If you are interested in the source code of Apache Knox, you can download the source from an Apache Mirror here.

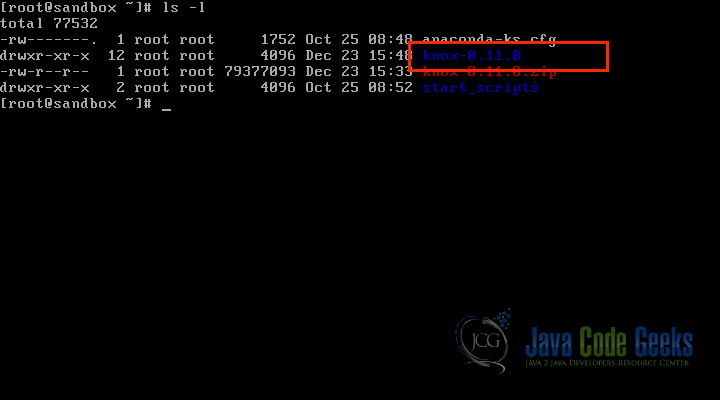

2.3 Unzipping the Knox zip

For this tutorial, we downloaded the binary package. Once the Apache Knox package is downloaded, it is time to unzip it. Use the following command:

unzip knox-0.11.0.zip

It will unpack the Knox binary in the same directory, as shown in the screenshot below:

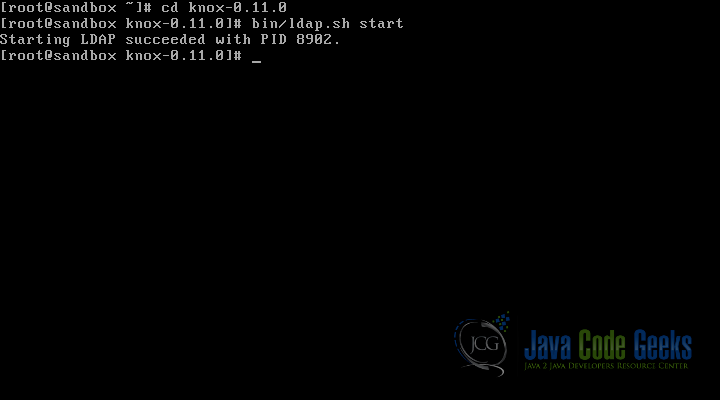
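As a quick sanity check after unpacking, you could verify that the scripts used in the rest of this tutorial are present. This is only a sketch: the helper name `check_knox_home` is hypothetical, and it looks only for the three scripts mentioned in this tutorial, not for a complete Knox layout.

```shell
#!/bin/sh
# Report which of the scripts used in this tutorial are missing
# from a Knox directory. Prints one line per missing script and
# returns non-zero if any of them is absent.
check_knox_home() {
  home="$1"
  missing=0
  for script in bin/ldap.sh bin/knoxcli.sh bin/gateway.sh; do
    if [ ! -f "$home/$script" ]; then
      echo "missing: $home/$script"
      missing=1
    fi
  done
  return "$missing"
}

# Run from the directory in which the zip was unpacked.
if check_knox_home knox-0.11.0; then
  echo "knox-0.11.0 contains the expected scripts"
fi
```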

2.4 Start LDAP

The Knox package comes with an LDAP server. We will use this LDAP server for the tutorial.

Use the following command to start the LDAP server:

cd knox-0.11.0

bin/ldap.sh start

As shown in the screenshot above, make sure the server is started and a PID is assigned to it. For example, PID 8902 is assigned in the screenshot.
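If you want to confirm later that the process behind that PID is still alive, one generic approach (not specific to Knox) is to send it signal 0 with `kill`. The sketch below assumes you run it as the same user that started the LDAP server, since otherwise the check can fail for permission reasons. The PID 8902 is just the example value from above.

```shell
#!/bin/sh
# Succeed if a process with the given PID exists and can be signalled.
# Signal 0 only performs the existence/permission check; nothing is sent.
is_running() {
  kill -0 "$1" 2>/dev/null
}

pid=8902   # example PID from the text; use the one printed on your system
if is_running "$pid"; then
  echo "process $pid is running"
else
  echo "process $pid is not running"
fi
```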

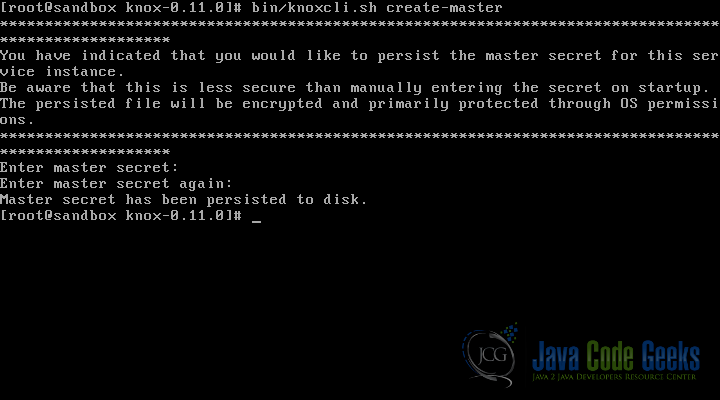

2.5 Creating Master Secret

Now it is time to create the master secret for Apache Knox. Use the following command to run the master secret script:

bin/knoxcli.sh create-master

As shown in the screenshot above, it will ask the user to enter the master secret. Once the master secret is confirmed by entering it a second time, it is stored persistently on disk.

2.6 Starting Apache Knox

The Apache Knox package provides shell scripts to start, stop or clean the Knox installation. The server will automatically discover the persisted master secret during startup and complete the setup process. The following commands may come in handy:

Starting the Knox gateway:

bin/gateway.sh start

Stopping the gateway:

bin/gateway.sh stop

If the gateway crashes or stops abruptly, leaving the PID file behind, the clean command can be used for the cleanup. It deletes the PID file and also cleans up the logs.

bin/gateway.sh clean

If the above steps are successful, we will have Apache Knox set up on the Hadoop Cluster. I highly recommend going through the knox-0.11.0 package to understand the directory structure of Knox; it can come in handy during debugging or troubleshooting.

3. Summary

In this quick tutorial, we started with an introduction to Apache Knox and the components of the Hadoop Ecosystem that it covers and supports. We then went through the installation process of Apache Knox in the subsequent sections of the tutorial.

I hope this gives a good understanding of the basics of Apache Knox and how to get started with it. For a more in-depth and detailed understanding of Apache Knox, you can visit the Developer’s Guide on the Apache website.