Develop a Web App with Python Flask and AWS S3

In this tutorial, I'll show you how to create a web application in Python using the Flask framework and AWS S3. The web application interacts with AWS S3 to demonstrate file upload and download. Flask is called a micro-framework because it does not require any particular tools or libraries: it ships without a database layer, form validation, or other components for which third-party libraries already provide common functions.

1. Introduction

Amazon Simple Storage Service, commonly known as AWS S3, is an online storage service. It is cheap, easy to set up, and users pay only for what they use. Typical uses include:

- Hosting static web content and data, including pages made dynamic with client-side scripts

- Data storage for analytics

- Backup and archival of data

- Disaster recovery solutions

1.1 S3 Bucket

Amazon S3 has two primary entities, objects and buckets, where objects are stored inside buckets. S3 provides high availability and durability by replicating the data of a bucket across multiple data centers. Each AWS account has a default quota on the number of buckets (100 when this tutorial was written), and this quota can be raised by submitting a request to the support center. Amazon S3 features –

- Each object in a bucket is given a unique id

- S3 allows a developer to upload/delete or read an object via the REST API

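- S3 historically offered two consistency models, read-after-write and eventual consistency, so that every committed change becomes visible to all participants; since December 2020 it provides strong read-after-write consistency for object reads, writes, and listings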

- Objects stored in a bucket never leave its location unless the user transfers them out

- Objects can be made private or public and rights can be granted to specific users
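As a rough illustration of the upload, read, and delete operations listed above, the sketch below chains three boto3 client calls (`put_object`, `get_object`, `delete_object`). The helper name `round_trip` is hypothetical, and the function assumes it receives a client created elsewhere, for example with `boto3.client('s3')`.

```python
def round_trip(s3_client, bucket, key, data):
    """Upload bytes, read them back, then delete the object.

    `s3_client` is assumed to be a boto3 S3 client.
    """
    # upload: store `data` under `key` in the bucket
    s3_client.put_object(Bucket=bucket, Key=key, Body=data)
    # read: the response body is a stream, so read() returns the bytes
    stored = s3_client.get_object(Bucket=bucket, Key=key)["Body"].read()
    # delete: remove the object again
    s3_client.delete_object(Bucket=bucket, Key=key)
    return stored
```

Under the hood, each of these calls is translated by boto3 into a request against the S3 REST API.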

1.2 S3 Object

S3 objects are the basic entities stored in an Amazon S3 bucket, which behaves like a simple key-value store. An object in S3 consists of the following –

- Key – Represents the name assigned to the object

- VersionId – Together with the key, the version ID uniquely identifies an object. It is a string that S3 generates when we add an object to the bucket

- Value – The content which we are storing in the bucket and can range up to 5 TB in size

- Metadata – Represents the name-value pairs with which we can store information regarding the object

- Access Control Information – Through this we control access to the objects stored in Amazon S3
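To make these parts concrete, here is a minimal sketch that reads them for an existing object through the boto3 `head_object` call. The helper name `describe_object` and the shape of the returned dictionary are assumptions made for illustration; the client is expected to be created elsewhere, for example with `boto3.client('s3')`.

```python
def describe_object(s3_client, bucket, key):
    """Collect key, version, size, and metadata of one S3 object."""
    head = s3_client.head_object(Bucket=bucket, Key=key)
    return {
        "Key": key,
        # VersionId is only present when bucket versioning is enabled
        "VersionId": head.get("VersionId"),
        # size of the value (the object content) in bytes
        "Size": head["ContentLength"],
        # user-defined name-value pairs stored with the object
        "Metadata": head.get("Metadata", {}),
    }
```

`head_object` fetches only the object's headers, so the value itself is not downloaded.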

2. Setting up Python and important libraries

To start with this tutorial, we need to install Python and set up a few Python libraries. Let us go ahead and install them one by one.

2.1 Setting up Python

If you need help with the Python installation on Windows, please watch this link. You can download Python from this link.

2.2 Setting up Flask library

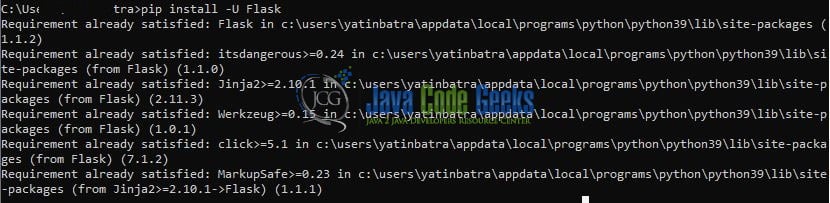

Once Python is successfully installed on your system, you can install Flask using a simple pip command. Run the command below from the command prompt and it will download the module from pypi.org and install it.

Installation command

pip install -U Flask

Once you trigger this command the installation will be started as shown in Fig. 1.

2.3 Setting up Boto3 library

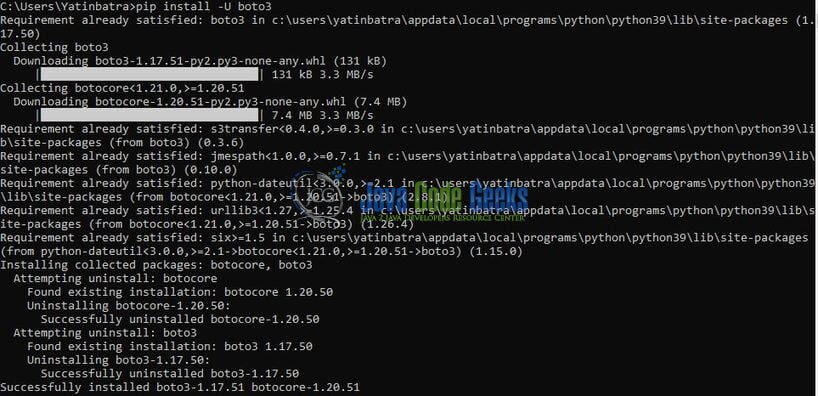

To install the Boto3 library you can fire the below command from the command prompt and it will successfully download the module from pypi.org and install it.

Installation command

pip install -U boto3

Once you trigger this command the installation will be started as shown in Fig. 2.
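One way to confirm that both installations succeeded is to ask Python whether the modules can be found. The snippet below is a small sketch using the standard library; the helper name `missing_packages` is hypothetical.

```python
import importlib.util


def missing_packages(names):
    """Return the module names from `names` that cannot be found."""
    return [name for name in names if importlib.util.find_spec(name) is None]


# an empty list means both libraries are importable
print(missing_packages(["flask", "boto3"]))
```

Note that the import names are lowercase (`flask`, `boto3`) even though the pip command for Flask uses a capital letter.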

3. Application Pre-requisites

3.1 Creating an IAM User

Let us create an AWS CLI IAM user. If you need to go through the process of creating an IAM user and attaching the Administrator Access policy, please watch this video. You are free to choose the S3 Full Access policy instead if you want to limit the CLI user's access to the AWS S3 service only.

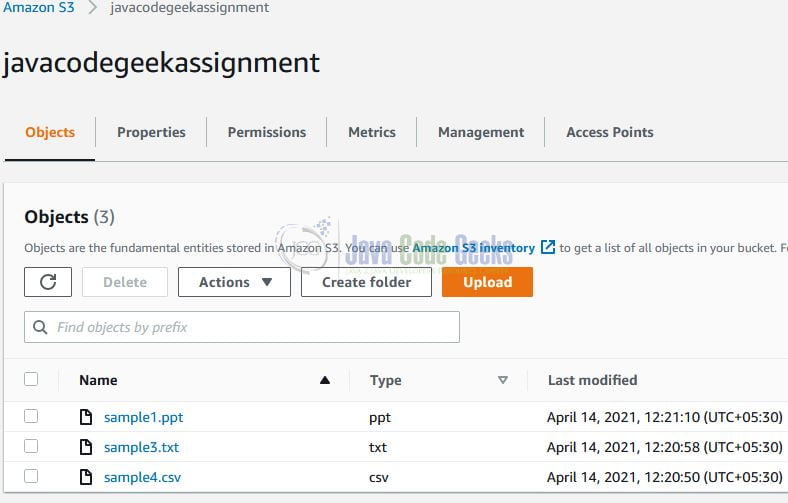

3.2 Creating an S3 Bucket

Let us create an S3 bucket that the application will upload files to and download files from. For this tutorial, we will create an S3 bucket named javacodegeekassignment. If everything goes well, the S3 bucket will be created.
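The bucket can also be created from code rather than from the console. The following is a sketch only: the helper name `create_bucket` is hypothetical, and it assumes a boto3 S3 client that was created for the same region as the bucket.

```python
def create_bucket(s3_client, bucket_name, region):
    """Create a bucket in `region` using a boto3 S3 client."""
    kwargs = {"Bucket": bucket_name}
    # us-east-1 is the default location and must not be passed as a constraint
    if region != "us-east-1":
        kwargs["CreateBucketConfiguration"] = {"LocationConstraint": region}
    return s3_client.create_bucket(**kwargs)
```

For this tutorial the call would look like `create_bucket(client, 'javacodegeekassignment', 'ap-south-1')`. Bucket names are globally unique, so the call fails if another account already owns the name.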

4. Creating an Application

Let us dive in with the programming stuff now. I am using JetBrains PyCharm as my preferred IDE. You’re free to choose the IDE of your choice.

4.1 Configuring CLI user

Add the AWS CLI user details, aws_access_key_id and aws_secret_access_key, to the AWS credentials file (typically ~/.aws/credentials) so that the application can perform these operations. The region is configured alongside them, commonly in the companion ~/.aws/config file. In our case, we have selected ap-south-1 as the default region in which we will perform these operations. You are free to change these details as per your configuration setup.

.credentials

[default]
aws_access_key_id = YOUR_AWS_ACCESS_KEY_ID
aws_secret_access_key = YOUR_AWS_SECRET_ACCESS_KEY
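Because the credentials file uses an INI-style layout, a quick sanity check is possible with the standard library. The sketch below parses the file contents and reports which of the two keys are missing from a profile; the helper name `missing_credential_keys` is hypothetical and is not part of boto3.

```python
import configparser

REQUIRED_KEYS = ("aws_access_key_id", "aws_secret_access_key")


def missing_credential_keys(credentials_text, profile="default"):
    """Return the required keys that the given profile does not define."""
    # interpolation is disabled so '%' characters in values are left alone
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(credentials_text)
    if not parser.has_section(profile):
        return list(REQUIRED_KEYS)
    return [key for key in REQUIRED_KEYS if not parser.has_option(profile, key)]
```

To check a real file, read its contents first, for example with `open(os.path.expanduser('~/.aws/credentials')).read()`, and pass the text to the function.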

4.2 Creating a Configuration file

Add the following code to the Python script containing the configuration, where we use the CLI credentials from the credentials file to create a client for interacting with S3. The script contains the create_s3_client() method that returns the client.

config.py

import boto3

S3 = 's3'


# creating the aws s3 client
# as a standard practice we are not hard-coding the aws access key id and secret key credentials here
# the credentials are defined in the .credentials file
def create_s3_client():
    return boto3.client(S3)
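If the region is not picked up from the AWS configuration, one option is to pass it explicitly through the `region_name` argument of `boto3.client`. The variant below is only a sketch: the `DEFAULT_REGION` constant and the `client_factory` parameter are hypothetical additions, the latter included so the function can be exercised without AWS access.

```python
DEFAULT_REGION = "ap-south-1"  # region used in this tutorial


def create_s3_client(region_name=DEFAULT_REGION, client_factory=None):
    """Return an S3 client pinned to `region_name`.

    `client_factory` is a hypothetical hook for tests; when it is
    omitted, boto3.client is used.
    """
    if client_factory is None:
        import boto3  # imported lazily so the module loads without boto3
        client_factory = boto3.client
    return client_factory("s3", region_name=region_name)
```

Calling `create_s3_client()` with no arguments behaves like the version in config.py, except that the region no longer depends on the local AWS configuration.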

4.3 Creating an Implementation file

Add the following code to the Python script containing the functions to upload, download, and list files from the S3 bucket. For brevity,

- We are not checking if the bucket exists or not

- We are assuming that either the bucket already exists or is newly created as shown in Section 3.2

s3demo.py

import os

from botocore.exceptions import ClientError

from config import create_s3_client

# bucket name
# note - we are considering that the bucket already exists in the aws environment.
# we can have a check_bucket() function to check whether the bucket exists or not
BUCKET = 'YOUR_BUCKET_NAME'

# s3 client instance to perform the s3 related operations
s3_client = create_s3_client()


# get the list of files from s3 bucket
def list_files():
    contents = []
    try:
        response = s3_client.list_objects_v2(Bucket=BUCKET)
        if 'Contents' in response:
            for item in response['Contents']:
                # print(item)
                contents.append(item)
        else:
            print('Bucket is empty')
    except ClientError as e:
        print(e)
    return contents


# upload a file to s3 bucket
def upload_file(file_location):
    try:
        # use the file name (last path component) as the object key
        name = os.path.basename(file_location)
        # upload_file returns None, so there is no response to inspect
        s3_client.upload_file(file_location, BUCKET, name)
        print('File uploaded successfully')
    except ClientError as e:
        print(e)


# download a file from s3 bucket
def download_file(file_name):
    destination = f'download/{file_name}'
    try:
        s3_client.download_file(BUCKET, file_name, destination)
        print('File downloaded successfully')
    except ClientError as e:
        print(e)
    return destination
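The comments in s3demo.py mention a possible check_bucket() function. A minimal sketch of such a check, based on the boto3 `head_bucket` call, might look like the following. Inside s3demo.py one would normally catch `ClientError` directly; this version inspects the `response` attribute that botocore's `ClientError` carries, which keeps the example free of imports. Treat the exact error handling as an assumption rather than the tutorial's implementation.

```python
def check_bucket(s3_client, bucket):
    """Return True if the bucket is reachable, False if S3 reports 404."""
    try:
        s3_client.head_bucket(Bucket=bucket)
        return True
    except Exception as error:
        # botocore's ClientError exposes the parsed error on `.response`
        response = getattr(error, "response", None) or {}
        if response.get("Error", {}).get("Code") == "404":
            return False
        # anything else (e.g. 403 or a network problem) is re-raised
        raise
```

A 403 error is deliberately not treated as "missing", because it usually means the bucket exists but the current user is not allowed to access it.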

4.4 Creating a Main file

Now that we have almost everything in place let us create the python script that will contain the endpoints for the user. The file consists of the flask app instance called app and the API resource implementation i.e.

- index(): To render the HTML template for the user and display the list of already uploaded files

- upload(): To upload the file to the S3 bucket

- download(…): To download the file from the S3 bucket

app.py

import os

from flask import Flask, render_template, request, redirect, send_file
from werkzeug.utils import secure_filename

from s3demo import list_files, upload_file, download_file

app = Flask(__name__)

# pre-requisites -
# 1. in the project directory remember to create the "upload" and "download" folders
# 2. aws iam cli user is already created and has the s3 full access policy attached
# 3. aws s3 bucket is already created


# creating the endpoints

# index
@app.route('/', methods=['GET'])
def index():
    return render_template('index.html', contents=list_files())


# uploading file to s3
@app.route('/upload', methods=['POST'])
def upload():
    f = request.files['file']
    if f.filename:
        print('Uploading file = {}'.format(f.filename))
        # secure_filename sanitizes the name, e.g. it replaces whitespace with underscores
        filename = secure_filename(f.filename)
        # saving the file in the local folder
        f.save(os.path.join('upload', filename))
        upload_file(f'upload/{filename}')
    else:
        print('Skipping file upload op')
    return redirect('/')


# downloading file from s3
# the <filename> part of the url is passed to the function as its argument
@app.route('/download/<filename>', methods=['GET'])
def download(filename):
    print('Downloading file = {}'.format(filename))
    output = download_file(filename)
    return send_file(output, as_attachment=True)


if __name__ == '__main__':
    app.run(debug=False)
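The first pre-requisite in the comments, creating the "upload" and "download" folders, can also be handled in code. The helper below is a sketch with a hypothetical name, `ensure_folders`, that could be called once before the app starts.

```python
import os


def ensure_folders(base_dir=".", names=("upload", "download")):
    """Create the working folders if they are missing and return their paths."""
    paths = []
    for name in names:
        path = os.path.join(base_dir, name)
        # exist_ok=True means no error is raised if the folder already exists
        os.makedirs(path, exist_ok=True)
        paths.append(path)
    return paths
```

Calling `ensure_folders()` just before `app.run(...)` would be one place to hook it in.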

5. Application Endpoints

Run the app.py Python script. Once app.py has started successfully, it exposes the index endpoint, which renders the HTML template in the browser as shown in Fig. 4.

Select any file and click on the Upload button. If everything goes well, the file will be uploaded to the S3 bucket and listed in the Uploaded Files section as shown in Fig. 5.

We can also verify the same from the S3 bucket.
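The same verification can be scripted. The sketch below walks the bucket listing with `list_objects_v2` and follows continuation tokens, since a single call returns at most 1000 keys. The helper name `key_in_bucket` is hypothetical, and the client is assumed to be a boto3 S3 client such as the one returned by create_s3_client().

```python
def key_in_bucket(s3_client, bucket, key):
    """Return True if `key` appears in the bucket listing."""
    kwargs = {"Bucket": bucket}
    while True:
        response = s3_client.list_objects_v2(**kwargs)
        for item in response.get("Contents", []):
            if item["Key"] == key:
                return True
        # IsTruncated signals that more pages of results are available
        if not response.get("IsTruncated"):
            return False
        kwargs["ContinuationToken"] = response["NextContinuationToken"]
```

For a single known key, a `head_object` call would be cheaper; the paginated loop is shown because the same pattern applies to listing large buckets.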

Once the file is successfully uploaded you can click on the file name to download it. That is all for this tutorial and I hope the article served you with whatever you were looking for. Happy Learning and do not forget to share!

6. Summary

In this tutorial, we learned:

- An introduction to the Flask micro-framework in Python and to AWS S3

- A sample program that creates a web application in Python with Flask and interacts with S3

You can download the source code of this tutorial from the Downloads section.

7. Download the Project

This was a python programming tutorial to create a web app using the flask micro-framework and perform a file upload/download activity via AWS S3.

You can download the full source code of this example here: Develop a Web App with Python Flask and AWS S3