Apache Solr Join Example

This is an article related to the Apache Solr Join. Generally, Apache Solr is used for search and browsing categories and facets.

1. Introduction

Apache Solr is an open-source software java search engine. It is scalable and can process a high volume of data. It is used to index the content and search a huge amount of content. It is a popular search engine. It is used as a document-based NoSQL data source. It can be also used as a key-value store. Solr has JSON, XML, and HTTP REST API.

2. Apache Solr Join

2.1 Prerequisites

Java 7 or 8 is required on the Linux, windows, or Mac operating system. Apache Solr 4.7.0 is required for this example.

2.2 Download

You can download Java 8 can be downloaded from the Oracle website. Apache Solr’s latest releases are available from the Apache Solr website.

2.3 Setup

You can set the environment variables for JAVA_HOME and PATH. They can be set as shown below:

Setup

JAVA_HOME="/desktop/jdk1.8.0_73" export JAVA_HOME PATH=$JAVA_HOME/bin:$PATH export PATH

2.4 How to download and install Apache Solr

Apache Solr’s latest releases are available from the Apache Solr website. After downloading the zip file can be extracted to a folder.

To start the Apache Solr, you can use the command below:

Solr start command

bin/solr start

The output of the above command is shown below:

Solr start command output

apples-MacBook-Air:solr-8.8.2 bhagvan.kommadi$ bin/solr start *** [WARN] *** Your open file limit is currently 2560. It should be set to 65000 to avoid operational disruption. If you no longer wish to see this warning, set SOLR_ULIMIT_CHECKS to false in your profile or solr.in.sh *** [WARN] *** Your Max Processes Limit is currently 1392. It should be set to 65000 to avoid operational disruption. If you no longer wish to see this warning, set SOLR_ULIMIT_CHECKS to false in your profile or solr.in.sh Waiting up to 180 seconds to see Solr running on port 8983 [-] Started Solr server on port 8983 (pid=3054). Happy searching!

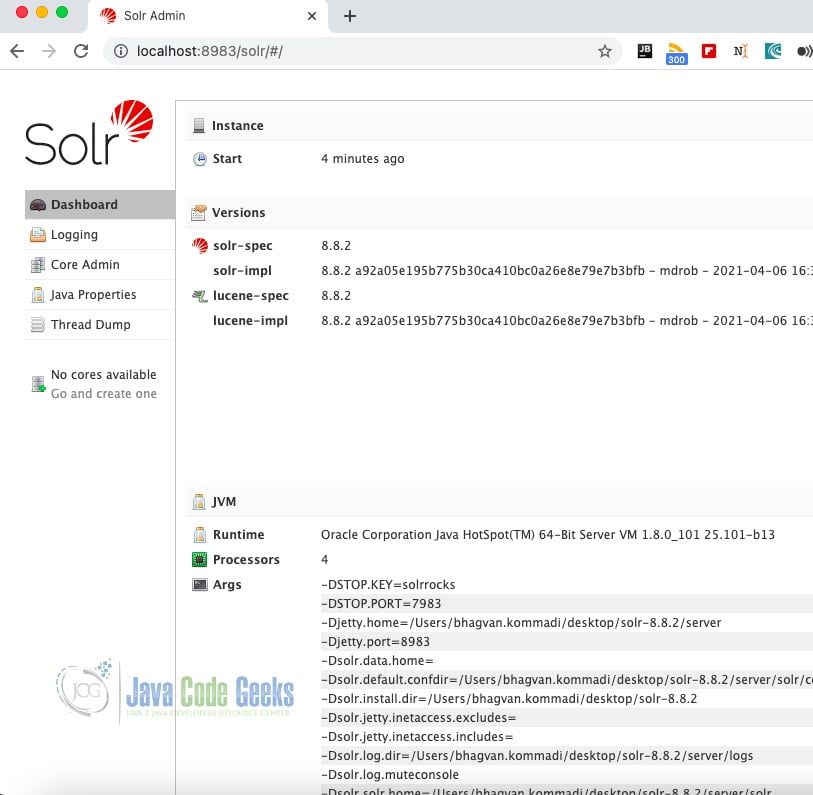

You can access the Solr application from the browse at : http://localhost:8983/solr/. The screenshot below shows the Solr application.

2.5 Apache Solr

Apache Solr merged into Lucene around 2010. Lucene was created by Doug Cutting in 1999. Solr was developed by Yonik Seeley at CNET. Solr had a cloud feature released in 4.0. Solr 6.0 supported parallel SQL queries. Solr is based on Lucene. It has REST API support. It has an inverted index feature to get documents for a query using the search word. The search word is entered by the user to link the documents to the word. Solr has the features such as support for XML/JSON/HTTP, recommendations, automatic load balancing, spell suggestions, autocompletion, geospatial search, authentication, authorization, multilingual keyword search, type ahead prediction, batch processing, streaming, machine learning models, high volume web traffic support, schema, schemaless configuration, faceted search, filtering, and cluster configuration.

2.6 Apache Solr – Join Example

Let us look at a schema first.

schema file

<?xml version="1.0" encoding="UTF-8" ?>

<!-- If you remove this field, you must _also_ disable the update log in solrconfig.xml

or Solr won't start. _version_ and update log are required for SolrCloud

-->

<fields>

<field name="_version_" type="long" indexed="true" stored="true"/>

<!-- points to the root document of a block of nested documents. Required for nested

document support, may be removed otherwise

-->

<field name="_root_" type="string" indexed="true" stored="false"/>

<!-- Only remove the "id" field if you have a very good reason to. While not strictly

required, it is highly recommended. A <uniqueKey> is present in almost all Solr

installations. See the <uniqueKey> declaration below where <uniqueKey> is set to "id".

Do NOT change the type and apply index-time analysis to the <uniqueKey> as it will likely

make routing in SolrCloud and document replacement in general fail. Limited _query_ time

analysis is possible as long as the indexing process is guaranteed to index the term

in a compatible way. Any analysis applied to the <uniqueKey> should _not_ produce multiple

tokens

-->

<field name="id" type="string" indexed="true" stored="true" required="true" multiValued="false" />

<!-- Dynamic field definitions allow using convention over configuration

for fields via the specification of patterns to match field names.

EXAMPLE: name="*_i" will match any field ending in _i (like myid_i, z_i)

RESTRICTION: the glob-like pattern in the name attribute must have

a "*" only at the start or the end. -->

<dynamicField name="*_i" type="int" indexed="true" stored="true"/>

<dynamicField name="*_is" type="int" indexed="true" stored="true" multiValued="true"/>

<dynamicField name="*_s" type="string" indexed="true" stored="true" />

<dynamicField name="*_ss" type="string" indexed="true" stored="true" multiValued="true"/>

<dynamicField name="*_l" type="long" indexed="true" stored="true"/>

<dynamicField name="*_ls" type="long" indexed="true" stored="true" multiValued="true"/>

<dynamicField name="*_t" type="text_general" indexed="true" stored="true"/>

<dynamicField name="*_txt" type="text_general" indexed="true" stored="true" multiValued="true"/>

<dynamicField name="*_en" type="text_en" indexed="true" stored="true" multiValued="true"/>

<dynamicField name="*_b" type="boolean" indexed="true" stored="true"/>

<dynamicField name="*_bs" type="boolean" indexed="true" stored="true" multiValued="true"/>

<dynamicField name="*_f" type="float" indexed="true" stored="true"/>

<dynamicField name="*_fs" type="float" indexed="true" stored="true" multiValued="true"/>

<dynamicField name="*_d" type="double" indexed="true" stored="true"/>

<dynamicField name="*_ds" type="double" indexed="true" stored="true" multiValued="true"/>

<!-- Type used to index the lat and lon components for the "location" FieldType -->

<dynamicField name="*_coordinate" type="tdouble" indexed="true" stored="false" />

<dynamicField name="*_dt" type="date" indexed="true" stored="true"/>

<dynamicField name="*_dts" type="date" indexed="true" stored="true" multiValued="true"/>

<dynamicField name="*_p" type="location" indexed="true" stored="true"/>

<!-- some trie-coded dynamic fields for faster range queries -->

<dynamicField name="*_ti" type="tint" indexed="true" stored="true"/>

<dynamicField name="*_tl" type="tlong" indexed="true" stored="true"/>

<dynamicField name="*_tf" type="tfloat" indexed="true" stored="true"/>

<dynamicField name="*_td" type="tdouble" indexed="true" stored="true"/>

<dynamicField name="*_tdt" type="tdate" indexed="true" stored="true"/>

<!--

<dynamicField name="*_c" type="currency" indexed="true" stored="true"/>

-->

<dynamicField name="ignored_*" type="ignored" multiValued="true"/>

<dynamicField name="attr_*" type="text_general" indexed="true" stored="true" multiValued="true"/>

<dynamicField name="random_*" type="random" />

<!-- uncomment the following to ignore any fields that don't already match an existing

field name or dynamic field, rather than reporting them as an error.

alternately, change the type="ignored" to some other type e.g. "text" if you want

unknown fields indexed and/or stored by default -->

<!--dynamicField name="*" type="ignored" multiValued="true" /-->

<!-- Field to use to determine and enforce document uniqueness.

Unless this field is marked with required="false", it will be a required field

-->

<field name="name" type="text_general" indexed="true" stored="true"/>

<field name="manu" type="text_general" indexed="true" stored="true"/>

<field name="manu_id_s" type="text_general" indexed="true" stored="true"/>

<field name="cat" type="text_general" indexed="true" stored="true" multiValued="true"/>

<field name="features" type="text_general" indexed="true" stored="true" multiValued="true"/>

<field name="weight" type="tdouble" indexed="true" stored="true"/>

<field name="price" type="tdouble" indexed="true" stored="true"/>

<field name="popularity" type="tdouble" indexed="true" stored="true"/>

<field name="store" type="text_general" indexed="true" stored="true"/>

<field name="inStock" type="boolean" indexed="true" stored="true"/>

<field name="manufacturedate_dt" type="text_general" indexed="true" stored="true"/>

</fields>

<uniqueKey>id</uniqueKey>

<types>

<!-- copyField commands copy one field to another at the time a document

is added to the index. It's used either to index the same field differently,

or to add multiple fields to the same field for easier/faster searching. -->

<!--

<copyField source="title" dest="text"/>

<copyField source="body" dest="text"/>

-->

<!-- field type definitions. The "name" attribute is

just a label to be used by field definitions. The "class"

attribute and any other attributes determine the real

behavior of the fieldType.

Class names starting with "solr" refer to java classes in a

standard package such as org.apache.solr.analysis

-->

<!-- The StrField type is not analyzed, but indexed/stored verbatim.

It supports doc values but in that case the field needs to be

single-valued and either required or have a default value.

-->

<fieldType name="string" class="solr.StrField" sortMissingLast="true" />

<!-- boolean type: "true" or "false" -->

<fieldType name="boolean" class="solr.BoolField" sortMissingLast="true"/>

<!-- sortMissingLast and sortMissingFirst attributes are optional attributes are

currently supported on types that are sorted internally as strings

and on numeric types.

This includes "string","boolean", and, as of 3.5 (and 4.x),

int, float, long, date, double, including the "Trie" variants.

- If sortMissingLast="true", then a sort on this field will cause documents

without the field to come after documents with the field,

regardless of the requested sort order (asc or desc).

- If sortMissingFirst="true", then a sort on this field will cause documents

without the field to come before documents with the field,

regardless of the requested sort order.

- If sortMissingLast="false" and sortMissingFirst="false" (the default),

then default lucene sorting will be used which places docs without the

field first in an ascending sort and last in a descending sort.

-->

<!--

Default numeric field types. For faster range queries, consider the tint/tfloat/tlong/tdouble types.

These fields support doc values, but they require the field to be

single-valued and either be required or have a default value.

-->

<fieldType name="int" class="solr.TrieIntField" precisionStep="0" positionIncrementGap="0"/>

<fieldType name="float" class="solr.TrieFloatField" precisionStep="0" positionIncrementGap="0"/>

<fieldType name="long" class="solr.TrieLongField" precisionStep="0" positionIncrementGap="0"/>

<fieldType name="double" class="solr.TrieDoubleField" precisionStep="0" positionIncrementGap="0"/>

<!--

Numeric field types that index each value at various levels of precision

to accelerate range queries when the number of values between the range

endpoints is large. See the javadoc for NumericRangeQuery for internal

implementation details.

Smaller precisionStep values (specified in bits) will lead to more tokens

indexed per value, slightly larger index size, and faster range queries.

A precisionStep of 0 disables indexing at different precision levels.

-->

<fieldType name="tint" class="solr.TrieIntField" precisionStep="8" positionIncrementGap="0"/>

<fieldType name="tfloat" class="solr.TrieFloatField" precisionStep="8" positionIncrementGap="0"/>

<fieldType name="tlong" class="solr.TrieLongField" precisionStep="8" positionIncrementGap="0"/>

<fieldType name="tdouble" class="solr.TrieDoubleField" precisionStep="8" positionIncrementGap="0"/>

<!-- The format for this date field is of the form 1995-12-31T23:59:59Z, and

is a more restricted form of the canonical representation of dateTime

http://www.w3.org/TR/xmlschema-2/#dateTime

The trailing "Z" designates UTC time and is mandatory.

Optional fractional seconds are allowed: 1995-12-31T23:59:59.999Z

All other components are mandatory.

Expressions can also be used to denote calculations that should be

performed relative to "NOW" to determine the value, ie...

NOW/HOUR

... Round to the start of the current hour

NOW-1DAY

... Exactly 1 day prior to now

NOW/DAY+6MONTHS+3DAYS

... 6 months and 3 days in the future from the start of

the current day

Consult the TrieDateField javadocs for more information.

Note: For faster range queries, consider the tdate type

-->

<fieldType name="date" class="solr.TrieDateField" precisionStep="0" positionIncrementGap="0"/>

<!-- A Trie based date field for faster date range queries and date faceting. -->

<fieldType name="tdate" class="solr.TrieDateField" precisionStep="6" positionIncrementGap="0"/>

<!--Binary data type. The data should be sent/retrieved in as Base64 encoded Strings -->

<fieldType name="binary" class="solr.BinaryField"/>

<!-- The "RandomSortField" is not used to store or search any

data. You can declare fields of this type it in your schema

to generate pseudo-random orderings of your docs for sorting

or function purposes. The ordering is generated based on the field

name and the version of the index. As long as the index version

remains unchanged, and the same field name is reused,

the ordering of the docs will be consistent.

If you want different psuedo-random orderings of documents,

for the same version of the index, use a dynamicField and

change the field name in the request.

-->

<fieldType name="random" class="solr.RandomSortField" indexed="true" />

<!-- solr.TextField allows the specification of custom text analyzers

specified as a tokenizer and a list of token filters. Different

analyzers may be specified for indexing and querying.

The optional positionIncrementGap puts space between multiple fields of

this type on the same document, with the purpose of preventing false phrase

matching across fields.

For more info on customizing your analyzer chain, please see

http://wiki.apache.org/solr/AnalyzersTokenizersTokenFilters

-->

<!-- One can also specify an existing Analyzer class that has a

default constructor via the class attribute on the analyzer element.

Example:

<fieldType name="text_greek" class="solr.TextField">

<analyzer class="org.apache.lucene.analysis.el.GreekAnalyzer"/>

</fieldType>

-->

<!-- A text field that only splits on whitespace for exact matching of words -->

<fieldType name="text_ws" class="solr.TextField" positionIncrementGap="100">

<analyzer>

<tokenizer class="solr.WhitespaceTokenizerFactory"/>

</analyzer>

</fieldType>

<!-- A general text field that has reasonable, generic

cross-language defaults: it tokenizes with StandardTokenizer,

removes stop words from case-insensitive "stopwords.txt"

(empty by default), and down cases. At query time only, it

also applies synonyms. -->

<fieldType name="text_general" class="solr.TextField" positionIncrementGap="100">

<analyzer type="index">

<tokenizer class="solr.StandardTokenizerFactory"/>

<filter class="solr.StopFilterFactory" ignoreCase="true" words="stopwords.txt" />

<!-- in this example, we will only use synonyms at query time

<filter class="solr.SynonymFilterFactory" synonyms="index_synonyms.txt" ignoreCase="true" expand="false"/>

-->

<filter class="solr.LowerCaseFilterFactory"/>

</analyzer>

<analyzer type="query">

<tokenizer class="solr.StandardTokenizerFactory"/>

<filter class="solr.StopFilterFactory" ignoreCase="true" words="stopwords.txt" />

<filter class="solr.SynonymFilterFactory" synonyms="synonyms.txt" ignoreCase="true" expand="true"/>

<filter class="solr.LowerCaseFilterFactory"/>

</analyzer>

</fieldType>

<!-- A text field with defaults appropriate for English: it

tokenizes with StandardTokenizer, removes English stop words

(lang/stopwords_en.txt), down cases, protects words from protwords.txt, and

finally applies Porter's stemming. The query time analyzer

also applies synonyms from synonyms.txt. -->

<fieldType name="text_en" class="solr.TextField" positionIncrementGap="100">

<analyzer type="index">

<tokenizer class="solr.StandardTokenizerFactory"/>

<!-- in this example, we will only use synonyms at query time

<filter class="solr.SynonymFilterFactory" synonyms="index_synonyms.txt" ignoreCase="true" expand="false"/>

-->

<!-- Case insensitive stop word removal.

-->

<filter class="solr.StopFilterFactory"

ignoreCase="true"

words="lang/stopwords_en.txt"

/>

<filter class="solr.LowerCaseFilterFactory"/>

<filter class="solr.EnglishPossessiveFilterFactory"/>

<filter class="solr.KeywordMarkerFilterFactory" protected="protwords.txt"/>

<!-- Optionally you may want to use this less aggressive stemmer instead of PorterStemFilterFactory:

<filter class="solr.EnglishMinimalStemFilterFactory"/>

-->

<filter class="solr.PorterStemFilterFactory"/>

</analyzer>

<analyzer type="query">

<tokenizer class="solr.StandardTokenizerFactory"/>

<filter class="solr.SynonymFilterFactory" synonyms="synonyms.txt" ignoreCase="true" expand="true"/>

<filter class="solr.StopFilterFactory"

ignoreCase="true"

words="lang/stopwords_en.txt"

/>

<filter class="solr.LowerCaseFilterFactory"/>

<filter class="solr.EnglishPossessiveFilterFactory"/>

<filter class="solr.KeywordMarkerFilterFactory" protected="protwords.txt"/>

<!-- Optionally you may want to use this less aggressive stemmer instead of PorterStemFilterFactory:

<filter class="solr.EnglishMinimalStemFilterFactory"/>

-->

<filter class="solr.PorterStemFilterFactory"/>

</analyzer>

</fieldType>

<!-- A text field with defaults appropriate for English, plus

aggressive word-splitting and autophrase features enabled.

This field is just like text_en, except it adds

WordDelimiterFilter to enable splitting and matching of

words on case-change, alpha numeric boundaries, and

non-alphanumeric chars. This means certain compound word

cases will work, for example query "wi fi" will match

document "WiFi" or "wi-fi".

-->

<fieldType name="text_en_splitting" class="solr.TextField" positionIncrementGap="100" autoGeneratePhraseQueries="true">

<analyzer type="index">

<tokenizer class="solr.WhitespaceTokenizerFactory"/>

<!-- in this example, we will only use synonyms at query time

<filter class="solr.SynonymFilterFactory" synonyms="index_synonyms.txt" ignoreCase="true" expand="false"/>

-->

<!-- Case insensitive stop word removal.

-->

<filter class="solr.StopFilterFactory"

ignoreCase="true"

words="lang/stopwords_en.txt"

/>

<filter class="solr.WordDelimiterFilterFactory" generateWordParts="1" generateNumberParts="1" catenateWords="1" catenateNumbers="1" catenateAll="0" splitOnCaseChange="1"/>

<filter class="solr.LowerCaseFilterFactory"/>

<filter class="solr.KeywordMarkerFilterFactory" protected="protwords.txt"/>

<filter class="solr.PorterStemFilterFactory"/>

</analyzer>

<analyzer type="query">

<tokenizer class="solr.WhitespaceTokenizerFactory"/>

<filter class="solr.SynonymFilterFactory" synonyms="synonyms.txt" ignoreCase="true" expand="true"/>

<filter class="solr.StopFilterFactory"

ignoreCase="true"

words="lang/stopwords_en.txt"

/>

<filter class="solr.WordDelimiterFilterFactory" generateWordParts="1" generateNumberParts="1" catenateWords="0" catenateNumbers="0" catenateAll="0" splitOnCaseChange="1"/>

<filter class="solr.LowerCaseFilterFactory"/>

<filter class="solr.KeywordMarkerFilterFactory" protected="protwords.txt"/>

<filter class="solr.PorterStemFilterFactory"/>

</analyzer>

</fieldType>

<!-- Less flexible matching, but less false matches. Probably not ideal for product names,

but may be good for SKUs. Can insert dashes in the wrong place and still match. -->

<fieldType name="text_en_splitting_tight" class="solr.TextField" positionIncrementGap="100" autoGeneratePhraseQueries="true">

<analyzer>

<tokenizer class="solr.WhitespaceTokenizerFactory"/>

<filter class="solr.SynonymFilterFactory" synonyms="synonyms.txt" ignoreCase="true" expand="false"/>

<filter class="solr.StopFilterFactory" ignoreCase="true" words="lang/stopwords_en.txt"/>

<filter class="solr.WordDelimiterFilterFactory" generateWordParts="0" generateNumberParts="0" catenateWords="1" catenateNumbers="1" catenateAll="0"/>

<filter class="solr.LowerCaseFilterFactory"/>

<filter class="solr.KeywordMarkerFilterFactory" protected="protwords.txt"/>

<filter class="solr.EnglishMinimalStemFilterFactory"/>

<!-- this filter can remove any duplicate tokens that appear at the same position - sometimes

possible with WordDelimiterFilter in conjuncton with stemming. -->

<filter class="solr.RemoveDuplicatesTokenFilterFactory"/>

</analyzer>

</fieldType>

<!-- Just like text_general except it reverses the characters of

each token, to enable more efficient leading wildcard queries. -->

<fieldType name="text_general_rev" class="solr.TextField" positionIncrementGap="100">

<analyzer type="index">

<tokenizer class="solr.StandardTokenizerFactory"/>

<filter class="solr.StopFilterFactory" ignoreCase="true" words="stopwords.txt" />

<filter class="solr.LowerCaseFilterFactory"/>

<filter class="solr.ReversedWildcardFilterFactory" withOriginal="true"

maxPosAsterisk="3" maxPosQuestion="2" maxFractionAsterisk="0.33"/>

</analyzer>

<analyzer type="query">

<tokenizer class="solr.StandardTokenizerFactory"/>

<filter class="solr.SynonymFilterFactory" synonyms="synonyms.txt" ignoreCase="true" expand="true"/>

<filter class="solr.StopFilterFactory" ignoreCase="true" words="stopwords.txt" />

<filter class="solr.LowerCaseFilterFactory"/>

</analyzer>

</fieldType>

<!-- This is an example of using the KeywordTokenizer along

With various TokenFilterFactories to produce a sortable field

that does not include some properties of the source text

-->

<fieldType name="alphaOnlySort" class="solr.TextField" sortMissingLast="true" omitNorms="true">

<analyzer>

<!-- KeywordTokenizer does no actual tokenizing, so the entire

input string is preserved as a single token

-->

<tokenizer class="solr.KeywordTokenizerFactory"/>

<!-- The LowerCase TokenFilter does what you expect, which can be

when you want your sorting to be case insensitive

-->

<filter class="solr.LowerCaseFilterFactory" />

<!-- The TrimFilter removes any leading or trailing whitespace -->

<filter class="solr.TrimFilterFactory" />

<!-- The PatternReplaceFilter gives you the flexibility to use

Java Regular expression to replace any sequence of characters

matching a pattern with an arbitrary replacement string,

which may include back references to portions of the original

string matched by the pattern.

See the Java Regular Expression documentation for more

information on pattern and replacement string syntax.

http://docs.oracle.com/javase/7/docs/api/java/util/regex/package-summary.html

-->

<filter class="solr.PatternReplaceFilterFactory"

pattern="([^a-z])" replacement="" replace="all"

/>

</analyzer>

</fieldType>

<!-- lowercases the entire field value, keeping it as a single token. -->

<fieldType name="lowercase" class="solr.TextField" positionIncrementGap="100">

<analyzer>

<tokenizer class="solr.KeywordTokenizerFactory"/>

<filter class="solr.LowerCaseFilterFactory" />

</analyzer>

</fieldType>

<!-- since fields of this type are by default not stored or indexed,

any data added to them will be ignored outright. -->

<fieldType name="ignored" stored="false" indexed="false" multiValued="true" class="solr.StrField" />

<!-- This point type indexes the coordinates as separate fields (subFields)

If subFieldType is defined, it references a type, and a dynamic field

definition is created matching *___<typename>. Alternately, if

subFieldSuffix is defined, that is used to create the subFields.

Example: if subFieldType="double", then the coordinates would be

indexed in fields myloc_0___double,myloc_1___double.

Example: if subFieldSuffix="_d" then the coordinates would be indexed

in fields myloc_0_d,myloc_1_d

The subFields are an implementation detail of the fieldType, and end

users normally should not need to know about them.

-->

<fieldType name="point" class="solr.PointType" dimension="2" subFieldSuffix="_d"/>

<!-- A specialized field for geospatial search. If indexed, this fieldType must not be multivalued. -->

<fieldType name="location" class="solr.LatLonType" subFieldSuffix="_coordinate"/>

</types>

</schema>

You can implement this by copying the directory example within solr installation and rename it as joinexample. Ensure that it is a deep copy of the directory. Within the directory, remove the unused directories like example-DIH, multicore,and example-schemaless. Remove the directories under the solr folder except for bin. You can copy the folders and files from the source code ($SOURCE_CODE) provided to solr. You can execute the commands below from the joinexample directory

Solr initial setup

cd $SOLR_INSTALL cp -R example joinexample cd langdetect rm -r example-DIH rm -r multicore cd solr rm -r collection1 cp * $SOURCE_CODE/* .

Note that the core.properties is changed the name from collection to joinexample directory. You can restart Solr from joinexample folder by using the command below:

Solr start command

cd $SOLR_INSTALL/joinexample java -jar start.jar

The output of the command is shown below:

Solr start output

apples-MacBook-Air:joinexample bhagvan.kommadi$ java -jar start.jar

0 [main] INFO org.eclipse.jetty.server.Server – jetty-8.1.10.v20130312

43 [main] INFO org.eclipse.jetty.deploy.providers.ScanningAppProvider – Deployment monitor /Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample/contexts at interval 0

55 [main] INFO org.eclipse.jetty.deploy.DeploymentManager – Deployable added: /Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample/contexts/solr-jetty-context.xml

1957 [main] INFO org.eclipse.jetty.webapp.StandardDescriptorProcessor – NO JSP Support for /solr, did not find org.apache.jasper.servlet.JspServlet

2070 [main] INFO org.apache.solr.servlet.SolrDispatchFilter – SolrDispatchFilter.init()

2097 [main] INFO org.apache.solr.core.SolrResourceLoader – JNDI not configured for solr (NoInitialContextEx)

2098 [main] INFO org.apache.solr.core.SolrResourceLoader – solr home defaulted to 'solr/' (could not find system property or JNDI)

2101 [main] INFO org.apache.solr.core.SolrResourceLoader – new SolrResourceLoader for directory: 'solr/'

2251 [main] INFO org.apache.solr.core.ConfigSolr – Loading container configuration from /Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample/solr/solr.xml

2478 [main] INFO org.apache.solr.core.CoresLocator – Config-defined core root directory: /Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample/solr

2491 [main] INFO org.apache.solr.core.CoreContainer – New CoreContainer 1720339

2492 [main] INFO org.apache.solr.core.CoreContainer – Loading cores into CoreContainer [instanceDir=solr/]

2508 [main] INFO org.apache.solr.handler.component.HttpShardHandlerFactory – Setting socketTimeout to: 0

2509 [main] INFO org.apache.solr.handler.component.HttpShardHandlerFactory – Setting urlScheme to: null

2517 [main] INFO org.apache.solr.handler.component.HttpShardHandlerFactory – Setting connTimeout to: 0

2517 [main] INFO org.apache.solr.handler.component.HttpShardHandlerFactory – Setting maxConnectionsPerHost to: 20

2520 [main] INFO org.apache.solr.handler.component.HttpShardHandlerFactory – Setting corePoolSize to: 0

2521 [main] INFO org.apache.solr.handler.component.HttpShardHandlerFactory – Setting maximumPoolSize to: 2147483647

2521 [main] INFO org.apache.solr.handler.component.HttpShardHandlerFactory – Setting maxThreadIdleTime to: 5

2522 [main] INFO org.apache.solr.handler.component.HttpShardHandlerFactory – Setting sizeOfQueue to: -1

2522 [main] INFO org.apache.solr.handler.component.HttpShardHandlerFactory – Setting fairnessPolicy to: false

2826 [main] INFO org.apache.solr.logging.LogWatcher – SLF4J impl is org.slf4j.impl.Log4jLoggerFactory

2827 [main] INFO org.apache.solr.logging.LogWatcher – Registering Log Listener [Log4j (org.slf4j.impl.Log4jLoggerFactory)]

2829 [main] INFO org.apache.solr.core.CoreContainer – Host Name:

3019 [main] INFO org.apache.solr.core.CoresLocator – Looking for core definitions underneath /Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample/solr

3031 [main] INFO org.apache.solr.core.CoresLocator – Found core joinexample in /Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample/solr/joinexample/

3032 [main] INFO org.apache.solr.core.CoresLocator – Found 1 core definitions

3035 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.CoreContainer – Creating SolrCore 'joinexample' using instanceDir: /Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample/solr/joinexample

3036 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.SolrResourceLoader – new SolrResourceLoader for directory: '/Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample/solr/joinexample/'

3089 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.SolrConfig – Using Lucene MatchVersion: LUCENE_47

3173 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.Config – Loaded SolrConfig: solrconfig.xml

3182 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.schema.IndexSchema – Reading Solr Schema from schema.xml

3233 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.schema.IndexSchema – [joinexample] Schema name=example

3616 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.schema.IndexSchema – unique key field: id

3797 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.SolrCore – solr.StandardDirectoryFactory

3805 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.SolrCore – [joinexample] Opening new SolrCore at /Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample/solr/joinexample/, dataDir=/Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample/solr/joinexample/data/

3806 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.SolrCore – JMX monitoring not detected for core: joinexample

3820 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.CachingDirectoryFactory – return new directory for /Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample/solr/joinexample/data

3821 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.SolrCore – New index directory detected: old=null new=/Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample/solr/joinexample/data/index/

3822 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.CachingDirectoryFactory – return new directory for /Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample/solr/joinexample/data/index

3920 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.SolrCore – no updateRequestProcessorChain defined as default, creating implicit default

3927 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.RequestHandlers – created /get: solr.RealTimeGetHandler

3927 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.RequestHandlers – adding lazy requestHandler: solr.ReplicationHandler

3929 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.RequestHandlers – created /replication: solr.ReplicationHandler

3931 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.RequestHandlers – created standard: solr.StandardRequestHandler

3931 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.RequestHandlers – adding lazy requestHandler: solr.FieldAnalysisRequestHandler

3931 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.RequestHandlers – created /analysis/field: solr.FieldAnalysisRequestHandler

3935 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.RequestHandlers – created /update: solr.UpdateRequestHandler

3936 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.RequestHandlers – created /admin/: org.apache.solr.handler.admin.AdminHandlers

3950 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.RequestHandlers – created /admin/ping: solr.PingRequestHandler

3978 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.handler.loader.XMLLoader – xsltCacheLifetimeSeconds=60

4002 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.SolrCore – Hard AutoCommit: disabled

4003 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.SolrCore – Soft AutoCommit: disabled

4135 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.SolrCore – SolrDeletionPolicy.onInit: commits: num=1

commit{dir=/Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample/solr/joinexample/data/index,segFN=segments_1,generation=1}

4136 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.SolrCore – newest commit generation = 1

4144 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.search.SolrIndexSearcher – Opening Searcher@1d244bf6[joinexample] main

4163 [searcherExecutor-5-thread-1] INFO org.apache.solr.core.SolrCore – [joinexample] Registered new searcher Searcher@1d244bf6[joinexample] main{StandardDirectoryReader(segments_1:1:nrt)}

4164 [coreLoadExecutor-4-thread-1] INFO org.apache.solr.core.CoreContainer – registering core: joinexample

4166 [main] INFO org.apache.solr.servlet.SolrDispatchFilter – user.dir=/Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample

4167 [main] INFO org.apache.solr.servlet.SolrDispatchFilter – SolrDispatchFilter.init() done

4214 [main] INFO org.eclipse.jetty.server.AbstractConnector – Started SocketConnector@0.0.0.0:8983

9953 [qtp519821334-20] INFO org.apache.solr.update.processor.LogUpdateProcessor – [joinexample] webapp=/solr path=/update params={} {add=[EN7800GTX/2DHTV/256M (1705807777788592128), 100-435805 (1705807777838923776)]} 0 100

9977 [qtp519821334-20] INFO org.apache.solr.update.UpdateHandler – start commit{,optimize=false,openSearcher=true,waitSearcher=true,expungeDeletes=false,softCommit=false,prepareCommit=false}

10024 [qtp519821334-20] INFO org.apache.solr.core.SolrCore – SolrDeletionPolicy.onCommit: commits: num=2

commit{dir=/Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample/solr/joinexample/data/index,segFN=segments_1,generation=1}

commit{dir=/Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample/solr/joinexample/data/index,segFN=segments_2,generation=2}

10025 [qtp519821334-20] INFO org.apache.solr.core.SolrCore – newest commit generation = 2

10043 [qtp519821334-20] INFO org.apache.solr.search.SolrIndexSearcher – Opening Searcher@442ffade[joinexample] main

10045 [qtp519821334-20] INFO org.apache.solr.update.UpdateHandler – end_commit_flush

10046 [searcherExecutor-5-thread-1] INFO org.apache.solr.core.SolrCore – [joinexample] Registered new searcher Searcher@442ffade[joinexample] main{StandardDirectoryReader(segments_2:3:nrt _0(4.7):C2)}

10046 [qtp519821334-20] INFO org.apache.solr.update.processor.LogUpdateProcessor – [joinexample] webapp=/solr path=/update params={commit=true} {commit=} 0 75

16425 [qtp519821334-19] INFO org.apache.solr.update.processor.LogUpdateProcessor – [joinexample] webapp=/solr path=/update params={} {add=[adata (1705807784622161920), apple (1705807784629501952), asus (1705807784630550528), ati (1705807784632647680), belkin (1705807784633696256), canon (1705807784634744832), corsair (1705807784635793408), dell (1705807784635793409), maxtor (1705807784636841984), samsung (1705807784637890560), ... (11 adds)]} 0 17

16452 [qtp519821334-19] INFO org.apache.solr.update.UpdateHandler – start commit{,optimize=false,openSearcher=true,waitSearcher=true,expungeDeletes=false,softCommit=false,prepareCommit=false}

16477 [qtp519821334-19] INFO org.apache.solr.core.SolrCore – SolrDeletionPolicy.onCommit: commits: num=2

commit{dir=/Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample/solr/joinexample/data/index,segFN=segments_2,generation=2}

commit{dir=/Users/bhagvan.kommadi/Desktop/solr-4.7.0/joinexample/solr/joinexample/data/index,segFN=segments_3,generation=3}

16478 [qtp519821334-19] INFO org.apache.solr.core.SolrCore – newest commit generation = 3

16482 [qtp519821334-19] INFO org.apache.solr.search.SolrIndexSearcher – Opening Searcher@1d1b7910[joinexample] main

16483 [qtp519821334-19] INFO org.apache.solr.update.UpdateHandler – end_commit_flush

16483 [searcherExecutor-5-thread-1] INFO org.apache.solr.core.SolrCore – [joinexample] Registered new searcher Searcher@1d1b7910[joinexample] main{StandardDirectoryReader(segments_3:5:nrt _0(4.7):C2 _1(4.7):C11)}

16484 [qtp519821334-19] INFO org.apache.solr.update.processor.LogUpdateProcessor – [joinexample] webapp=/solr path=/update params={commit=true} {commit=} 0 32

20593 [qtp519821334-18] INFO org.apache.solr.core.SolrCore – [joinexample] webapp=/solr path=/select params={q={!join+from%3Dmanu_id_s+to%3Did}cat:"graphics+card"} hits=2 status=0 QTime=58

You can post the secondary_products.xml and suppliers.xml file (provided in the source code) from $SOLR_INSTALL/example-docs using the command below.

posting files to solr

apples-MacBook-Air:exampledocs bhagvan.kommadi$ java -Dtype=application/xml -Durl=http://localhost:8983/solr/joinexample/update -jar post.jar secondary_products.xml SimplePostTool version 1.5 Posting files to base url http://localhost:8983/solr/joinexample/update using content-type application/xml.. POSTing file secondary_products.xml 1 files indexed. COMMITting Solr index changes to http://localhost:8983/solr/joinexample/update.. Time spent: 0:00:00.308 apples-MacBook-Air:exampledocs bhagvan.kommadi$ java -Dtype=application/xml -Durl=http://localhost:8983/solr/joinexample/update -jar post.jar suppliers.xml SimplePostTool version 1.5 Posting files to base url http://localhost:8983/solr/joinexample/update using content-type application/xml.. POSTing file suppliers.xml 1 files indexed. COMMITting Solr index changes to http://localhost:8983/solr/joinexample/update.. Time spent: 0:00:00.127 apples-MacBook-Air:exampledocs bhagvan.kommadi$

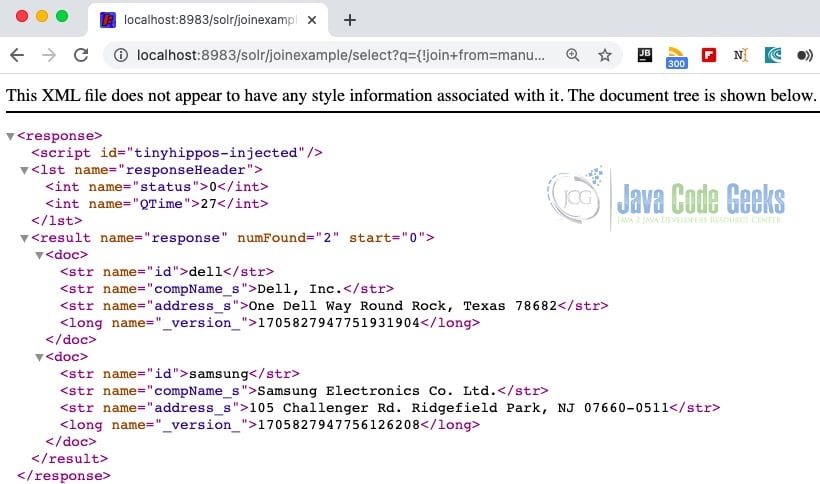

You can launch the browser pointing to http://localhost:8983/solr/joinexample/select?q={!join+from=manu_id_s+to=id}cat:Video. The screenshot below shows the query results in join example core.

The above example is a join Query to find out the details of Video manufacturers. The join QueryParser identifies the {!join} syntax. The Joins are based on Solr’s LocalParam syntax. It is not like SQL syntax. Joins in Solr are like inner queries. The above query is used for finding all video products using the join on the field manufacturer id.

3. Download the Source Code

You can download the full source code of this example here: Apache Solr Join Example